How to Build an AI Companion with Pipecat

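AI companion apps reached roughly 220 million downloads worldwide across the Apple App Store and Google Play Store in 2025, an 88% increase over the previous year. With new AI companions launching daily and constant debate and controversy over their use, this fast-growing field is hard to ignore. Whether users want someone to talk to, a friend, or a conversation practice partner, AI companions are an emerging category of frontier technology that combines many of the cutting-edge tools available today. Generated video, generated text, and generated voice are converging, which creates the opportunity to build companions that feel real.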

The Voice of an AI Companion

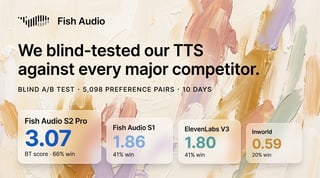

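One of the most important aspects of an AI companion is its voice. The voice distills the companion's personality, character, and identity, and it is essential to conveying who the companion is. Giving users the best experience takes top-quality audio, along with real-time streaming for live chats and calls, emotion control, and customizability.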

Pipecat

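For developers building real-time AI companions that chat over voice calls, Pipecat is an excellent choice. Pipecat provides a developer platform and SDK for building live, streaming voice chat through the Daily rooms product of its parent company, Daily. Pipecat handles the infrastructure that streams information to and from the AI companion, and it ties together the building blocks: speech-to-text (STT), the LLM, and text-to-speech (TTS). Daily rooms serve as the environment where the user and the AI companion connect. Pipecat also offers many integrations with text-to-speech providers, including Fish Audio. To use Fish Audio's expressive voices, you only need to swap in the Fish Audio client.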

Getting Started with Pipecat

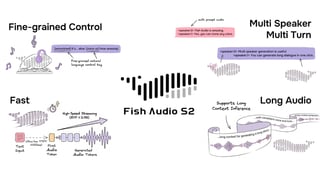

In Python, Pipecat's FishTTSService provides real-time text-to-speech synthesis through Fish Audio's WebSocket-based streaming API.

Install the required dependencies:

pip install "pipecat-ai[fish]"

Then set up your Fish Audio account.

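First, sign in to Fish Audio. You can use the default voice, clone your own voice, or pick a voice from the library. Fish Audio's voice cloning is a top-tier AI voice cloner that captures rich emotional expression and vocal character. To create a clone, you need a recording of the target voice that is at least 10 seconds long. If you want to get started faster, you can also find community-created voices on the Discovery page. Once you have chosen a voice, get an API key from the API console and set it as the FISH_API_KEY environment variable. Fish Audio is then ready to integrate with Pipecat.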
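Before wiring anything into Pipecat, it can help to check that the key is actually visible to your process. The following is a minimal sketch using only the Python standard library; the helper name load_fish_api_key is hypothetical and is not part of Pipecat or the Fish Audio SDK.

```python
import os


def load_fish_api_key(env=None):
    """Return the Fish Audio API key from FISH_API_KEY, or raise a clear error.

    `env` defaults to os.environ; passing a plain dict makes the helper easy to test.
    This helper is illustrative and not part of Pipecat or Fish Audio.
    """
    env = os.environ if env is None else env
    key = env.get("FISH_API_KEY", "").strip()
    if not key:
        raise RuntimeError(
            "FISH_API_KEY is not set. Export it in your shell before starting the bot."
        )
    return key


# Typical usage at startup:
#   api_key = load_fish_api_key()
```

Failing early with a readable message is usually easier to debug than an authentication error that surfaces later, once the WebSocket connection is opened.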

The Text-to-Speech (TTS) Service

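Once Fish Audio is ready, you need to create a TTS service and place it in your Pipecat pipeline. Its position matters: the service has to sit where it receives text and can emit audio frames downstream. See Pipecat's official documentation for details.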
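To make the placement requirement concrete, here is a toy model of stage ordering written in plain Python. It deliberately does not use Pipecat's classes: run_pipeline, fake_stt, fake_llm, and fake_tts are hypothetical stand-ins, and the real services are asynchronous and frame-based. The only point it illustrates is that the TTS stage must come after a stage that produces text.

```python
def fake_stt(audio):
    """Stand-in for speech-to-text: bytes in, text out."""
    if not isinstance(audio, bytes):
        raise TypeError("STT expects audio bytes")
    return audio.decode("utf-8")


def fake_llm(text):
    """Stand-in for the LLM: text in, reply text out."""
    if not isinstance(text, str):
        raise TypeError("LLM expects text")
    return f"You said: {text}"


def fake_tts(text):
    """Stand-in for text-to-speech: text in, audio bytes out."""
    if not isinstance(text, str):
        raise TypeError("TTS expects text, so it must follow a text-producing stage")
    return text.encode("utf-8")


def run_pipeline(stages, frame):
    """Pass one frame through each stage in order and return the final output."""
    for stage in stages:
        frame = stage(frame)
    return frame


# Correct order: audio in -> STT -> LLM -> TTS -> audio out.
PIPELINE = [fake_stt, fake_llm, fake_tts]
reply_audio = run_pipeline(PIPELINE, b"hello")
```

If fake_tts were moved to the front of the list, it would receive raw audio bytes instead of text and raise a TypeError, which mirrors the kind of mistake a misplaced TTS service produces in a real pipeline.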

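That's it! Once the TTS service takes in the LLM's text chunks or direct speech requests and outputs audio frames, your AI companion can talk to users in a Fish Audio voice. From here you can try different voices, write a system prompt that has the LLM generate the emotion tags Fish Audio supports, or even combine multiple AI companions to create more complex conversations.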
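As one way to approach the emotion-tag idea, the sketch below builds a system prompt that asks the LLM to prefix each sentence with a tag. The tag names, the parenthesized syntax, the persona name, and the build_system_prompt helper are all assumptions made for illustration; check Fish Audio's documentation for the tags your chosen model actually supports.

```python
# Assumed example tags; replace with the tags Fish Audio documents for your model.
EMOTION_TAGS = ["(happy)", "(sad)", "(excited)"]


def build_system_prompt(persona, tags):
    """Build a system prompt that asks the LLM to emit one emotion tag per sentence."""
    tag_list = ", ".join(tags)
    return (
        f"You are {persona}, a friendly voice companion. "
        f"Begin every sentence with exactly one emotion tag from this list: {tag_list}. "
        "Your reply will be spoken aloud, so write plain sentences "
        "without markdown, emoji, or stage directions."
    )


# A chat-style message list, in the common role/content shape used by many LLM APIs.
messages = [
    {"role": "system", "content": build_system_prompt("Mika", EMOTION_TAGS)},
]
```

Keeping the allowed tags in a single list makes it easy to tighten or expand the companion's emotional range without rewriting the prompt text.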

James is a legendary machine learning engineer working across infrastructure and automation. Find him fiddling with 67 software and hardware systems at twango.dev since 2006.

Read more articles by James Ding