Open-source LLM inference engines compared: SGLang, vLLM, and MAX (with BentoML), 2026

As AI models move from research to production, the inference engine you choose determines your latency, throughput, and infrastructure cost. The open-source ecosystem has consolidated around three serious contenders — each with a distinct architectural philosophy and set of trade-offs.

This post breaks down SGLang, vLLM, and MAX (Modular) — the three engines that matter most heading into late 2026. We cover what each does, where it shines, where it doesn't, and how they compare head-to-head.

SGLang

GitHub: sgl-project/sglang (~25K stars) · License: Apache 2.0 · Latest: v0.5.9 (Feb 2026)

Description

SGLang (Structured Generation Language) is a high-performance serving framework for LLMs and multimodal models, originally developed at UC Berkeley's Sky Computing Lab by the LMSYS.org team. In January 2026, the SGLang project spun out as RadixArk, a commercial startup valued at ~$400M in an Accel-led round — with angel investment from Intel CEO Lip-Bu Tan. Co-founder and CEO Ying Sheng previously served as a research scientist at xAI.

SGLang's core innovation is RadixAttention, which uses a radix tree data structure for automatic, fine-grained KV cache reuse. This makes it exceptionally fast for multi-turn conversations, RAG pipelines, and any workload with shared prefixes. Its structured output engine (xgrammar backend) is the fastest available in open source, delivering up to 10× faster JSON decoding than alternatives.
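The radix-tree idea behind RadixAttention can be sketched as longest-prefix matching over token IDs. The toy below is not SGLang's implementation: a real cache stores references to KV-cache pages at each node and evicts entries under memory pressure, both omitted here, and the class and method names (`RadixCache`, `match_prefix`, `insert`) are hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Node:
    tokens: tuple[int, ...] = ()  # edge label: a run of token IDs (radix-tree compression)
    children: dict[int, Node] = field(default_factory=dict)  # keyed by first token of each edge


def _common_len(a: tuple[int, ...], b: list[int]) -> int:
    """Length of the shared prefix of a and b."""
    n = 0
    while n < len(a) and n < len(b) and a[n] == b[n]:
        n += 1
    return n


class RadixCache:
    """Toy radix tree over token IDs; a real cache would also hold KV pages per node."""

    def __init__(self) -> None:
        self.root = Node()

    def match_prefix(self, seq: list[int]) -> int:
        """Number of leading tokens of seq whose KV state would be reusable."""
        node, i = self.root, 0
        while i < len(seq) and seq[i] in node.children:
            child = node.children[seq[i]]
            n = _common_len(child.tokens, seq[i:])
            i += n
            if n < len(child.tokens):  # diverged partway along an edge
                break
            node = child
        return i

    def insert(self, seq: list[int]) -> None:
        """Add seq, splitting an edge at the point where seq diverges from it."""
        node, i = self.root, 0
        while i < len(seq):
            head = seq[i]
            if head not in node.children:
                node.children[head] = Node(tuple(seq[i:]))
                return
            child = node.children[head]
            n = _common_len(child.tokens, seq[i:])
            if n < len(child.tokens):
                mid = Node(child.tokens[:n])  # new internal node for the shared part
                child.tokens = child.tokens[n:]
                mid.children[child.tokens[0]] = child
                node.children[head] = mid
                child = mid
            node, i = child, i + n


cache = RadixCache()
system = [101, 102, 103, 104]  # made-up token IDs for a shared system prompt
cache.insert(system + [1, 2, 3])
print(cache.match_prefix(system + [1, 2, 9]))  # 6
```

Storing whole runs of tokens on a single edge keeps the tree shallow even for long shared prompts; the SGLang paper pairs this structure with LRU eviction of leaf nodes, which a production version would need.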

SGLang now runs on 400,000+ GPUs worldwide and generates trillions of tokens daily, with notable production users including xAI (as its default LLM engine), AMD, NVIDIA, LinkedIn, and Cursor.

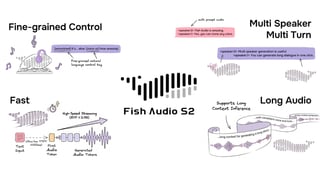

Fish Audio S2 & SGLang: Fish Audio's S2 model — a 4B-parameter Dual-Autoregressive TTS architecture trained on 10M+ hours of multilingual audio — is structurally isomorphic to standard autoregressive LLMs. This means it natively inherits all SGLang optimizations: continuous batching, paged KV cache, CUDA graph replay, and RadixAttention. For voice cloning workloads, RadixAttention caches reference audio KV states, achieving an average 86.4% prefix-cache hit rate — a massive efficiency gain for production TTS serving. Fish Audio open-sourced S2 with first-class SGLang support.
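A prefix-cache hit rate is commonly defined as the fraction of prompt tokens whose KV state comes from cache instead of being recomputed. Assuming that definition, the sketch below shows why voice cloning scores well: many requests reuse one long reference-audio prefix. The function name and every token count are hypothetical and are not Fish Audio's measurements.

```python
def prefix_hit_rate(requests: list[tuple[str, int, int]]) -> float:
    """requests: (reference_id, reference_tokens, text_tokens) for each request.

    Assumes the reference-audio tokens form the shared prefix: the first request
    for a given reference computes them, later requests reuse them from cache.
    Per-request text tokens are always computed.
    """
    seen: set[str] = set()
    cached = total = 0
    for ref_id, ref_tokens, text_tokens in requests:
        total += ref_tokens + text_tokens
        if ref_id in seen:
            cached += ref_tokens
        else:
            seen.add(ref_id)
    return cached / total if total else 0.0


# Ten requests cloning the same voice: 900 reference tokens, 100 text tokens each.
workload = [("voice_a", 900, 100)] * 10
print(f"{prefix_hit_rate(workload):.1%}")  # 81.0%
```

Under this model the rate rises with longer reference clips and with more requests per voice, since only the first request for each reference pays the full prefill cost.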

Pros

- Best-in-class throughput — ~29% faster than vLLM on batch throughput benchmarks (H100, Llama 3.1 8B, ShareGPT 1K prompts: ~16,200 tok/s vs ~12,500 tok/s)

- RadixAttention delivers 10–20% speedup on multi-turn chat and up to 6.4× on prefix-heavy RAG workloads

- Fastest structured output — xgrammar backend is 3–10× faster than alternatives for constrained JSON/grammar decoding

- Broad modality support — 60+ LLM families, 30+ multimodal models, embedding/reward models, diffusion models (image & video, up to 5× faster), and TTS (Fish Audio S2)

- Strong RL integration — Miles framework (by RadixArk) for reinforcement learning training loops

- Wide hardware support — NVIDIA (GB200 → RTX 4090), AMD MI300X/MI355, Google TPU (via SGLang-Jax), Intel Xeon, Ascend NPU, Apple Silicon (MLX)

- Active release cadence — ~3-week release cycle, fast to support new models (first to run DeepSeek R1 at scale with P/D disaggregation on 96 H100s)
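The structured-output bullet above rests on grammar-constrained decoding: at each step, tokens that would violate the target grammar are masked out before a token is chosen. The toy below illustrates only that masking idea with a hand-written state machine for a JSON-style list of digits; it is not xgrammar's API or its optimized grammar engine, and the six-token vocabulary and fake logits are hypothetical.

```python
import math

# Hypothetical six-token vocabulary; real tokenizers have on the order of 100K entries.
VOCAB = ["[", "]", ",", "1", "2", "x"]
DIGITS = {"1", "2"}


def allowed_tokens(prefix: list[str]) -> set[str]:
    """Tokens that keep prefix a valid prefix of the grammar  '[' digit (',' digit)* ']'."""
    if not prefix:
        return {"["}
    last = prefix[-1]
    if last in ("[", ","):
        return set(DIGITS)  # a digit must follow '[' or ','
    if last in DIGITS:
        return {",", "]"}  # continue the list or close it
    return set()  # after ']' the output is complete


def constrained_greedy(step_logits: list[list[float]]) -> str:
    """Greedy decoding where grammar-violating tokens are masked to -inf before argmax."""
    out: list[str] = []
    for logits in step_logits:
        allowed = allowed_tokens(out)
        if not allowed:
            break
        masked = [s if tok in allowed else -math.inf for tok, s in zip(VOCAB, logits)]
        best = max(range(len(VOCAB)), key=lambda i: masked[i])
        out.append(VOCAB[best])
    return "".join(out)


# Fake per-step logits (order matches VOCAB). Unconstrained, "x" would win every step.
steps = [
    [1, 0, 0, 2, 0, 5],
    [0, 1, 0, 3, 2, 5],
    [0, 1, 2, 3, 0, 5],
    [0, 0, 0, 1, 3, 5],
    [0, 2, 1, 3, 0, 5],
]
print(constrained_greedy(steps))  # [1,2]
```

Because the mask is applied before selection, the output is valid by construction; the engineering challenge that backends like xgrammar address is computing such masks quickly for large vocabularies and full JSON grammars.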

Cons

- Smaller community — ~25K GitHub stars vs vLLM's ~75K; fewer third-party integrations and tutorials

- Linux-only — requires WSL on Windows; no native macOS GPU serving

- Python GIL bottleneck — request router hits scaling limits above ~150 concurrent requests

- Limited GGUF support — not ideal for quantized edge deployment compared to llama.cpp

- Stability — occasional issues with release candidate dependencies; less battle-tested at the extreme tail of enterprise edge cases

vLLM

GitHub: vllm-project/vllm (~75K stars) · License: Apache 2.0 · Latest: v0.19.0 (Apr 2026)

Description

vLLM is the most widely adopted open-source LLM serving engine and the de facto industry standard. It powers production systems at Amazon (Rufus, serving 250M customers), LinkedIn, Roblox (4B tokens/week), Meta, Mistral AI, IBM, and Stripe (which reported a 73% reduction in inference cost). The team behind vLLM formed Inferact, raising $150M in January 2026 to commercialize the project.

vLLM's foundational innovation is PagedAttention, which borrows from OS virtual memory management to split KV caches into non-contiguous blocks, reducing GPU memory waste by up to 80%. The V1 architecture rewrite (default since v0.8.0, fully replacing V0 by Q3 2025) restructured the engine into a multi-process architecture with isolated scheduler, engine core, and GPU workers communicating via ZeroMQ — delivering up to 1.7× higher throughput than the original design.
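The paging idea can be sketched in a few lines of Python. Each sequence keeps a block table that maps its logical KV blocks to physical blocks taken from a shared free pool, so memory grows one fixed-size block at a time and at most the tail of one block per sequence sits unused. This is a simplified illustration, not vLLM's block manager: the 16-token block size and all class and function names are assumptions, and features such as copy-on-write sharing and preemption are reduced to an exception.

```python
from dataclasses import dataclass, field

BLOCK_SIZE = 16  # tokens per KV block (illustrative value)


class OutOfBlocks(Exception):
    """Raised when the pool is exhausted; a real scheduler would preempt a sequence."""


@dataclass
class Sequence:
    num_tokens: int = 0
    block_table: list[int] = field(default_factory=list)  # logical index -> physical block id


class BlockAllocator:
    def __init__(self, num_blocks: int) -> None:
        self.free = list(range(num_blocks))  # physical blocks need not be contiguous

    def append_tokens(self, seq: Sequence, n: int) -> None:
        """Grow seq by n tokens, taking a new physical block only when the last one is full."""
        for _ in range(n):
            if seq.num_tokens % BLOCK_SIZE == 0:  # current block full (or no block yet)
                if not self.free:
                    raise OutOfBlocks("no free KV blocks")
                seq.block_table.append(self.free.pop())
            seq.num_tokens += 1

    def release(self, seq: Sequence) -> None:
        """Return all of seq's blocks to the pool when the request finishes."""
        self.free.extend(seq.block_table)
        seq.block_table.clear()
        seq.num_tokens = 0


def physical_slot(seq: Sequence, token_idx: int) -> tuple[int, int]:
    """Translate a token position into (physical block id, offset), like a page-table lookup."""
    return seq.block_table[token_idx // BLOCK_SIZE], token_idx % BLOCK_SIZE


alloc = BlockAllocator(num_blocks=8)
seq = Sequence()
alloc.append_tokens(seq, 40)  # 40 tokens -> 3 blocks (16 + 16 + 8 used)
print(len(seq.block_table), len(alloc.free))  # 3 5
```

Only the last block of a sequence can be partially empty, which is where the memory savings over reserving a contiguous maximum-length buffer per request come from.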

vLLM has the broadest model and hardware support of any engine: text LLMs (Llama 3/4, Qwen 3, DeepSeek V3, Gemma 4, GPT-OSS), vision-language models (InternVL, Qwen2.5-VL, Pixtral), audio models (Qwen3-ASR/Omni), and embedding models. The separate vLLM-Omni project extends support to diffusion and TTS models. Hardware spans NVIDIA, AMD ROCm, Intel XPU/Gaudi, Google TPU, AWS Trainium, ARM CPUs, and IBM Z mainframes.

Pros

- Industry standard — ~75K GitHub stars, 200+ contributors per release, largest ecosystem of tutorials, guides, and integrations

- Broadest compatibility — more supported model architectures and hardware backends than any other engine

- Production-proven — battle-tested at massive scale (Amazon, Roblox, Stripe, Meta)

- V1 architecture — zero-config optimizations, automatic prefix caching, unified chunked prefill; v0.16.0 added async scheduling with a 30.8% throughput improvement

- OpenAI-compatible API — drop-in replacement for OpenAI endpoints

- Strong Kubernetes story — official Production Stack + llm-d project (Red Hat, Google Cloud, IBM, NVIDIA) for disaggregated serving

- Scales at extreme concurrency — C++ routing handles 150+ concurrent requests better than Python-based alternatives
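Because the server speaks the OpenAI wire format, any HTTP client can call it. The sketch below only builds the request with Python's standard library and does not send it; it assumes a server started locally with `vllm serve` on its default port 8000, and the model name is a placeholder for whatever model the server loaded. SGLang and MAX Serve also expose OpenAI-compatible endpoints, so the same request shape applies with their host and port.

```python
import json
import urllib.request

BASE_URL = "http://localhost:8000/v1"  # assumption: local `vllm serve` on its default port
MODEL = "meta-llama/Llama-3.1-8B-Instruct"  # placeholder: the model the server was started with


def chat_request(prompt: str, max_tokens: int = 128) -> urllib.request.Request:
    """Build (but do not send) a POST to the OpenAI-style /chat/completions route."""
    body = {
        "model": MODEL,
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": max_tokens,
    }
    return urllib.request.Request(
        f"{BASE_URL}/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


req = chat_request("Summarize PagedAttention in one sentence.")
# With a server running:
# reply = json.load(urllib.request.urlopen(req))["choices"][0]["message"]["content"]
```

Existing OpenAI SDK clients can usually be pointed at the same base URL instead of hand-building requests.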

Cons

- ~23% lower batch throughput than SGLang in the H100 / Llama 3.1 8B benchmark (~12,500 vs ~16,200 tok/s; equivalently, SGLang is ~29% faster)

- Less efficient prefix caching — PagedAttention lacks SGLang's automatic radix-tree-based prefix reuse

- Rapid development pace — occasionally outpaces stability; V1 migration removed some features (best_of, per-request logits processors)

- GPU-focused — limited CPU fallback performance

- Structured output — slower than SGLang for constrained JSON/grammar decoding, although vLLM also supports the xgrammar backend
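For contrast with SGLang's radix tree, vLLM's automatic prefix caching identifies each full KV block by a hash that chains the parent block's hash with the block's own tokens, so two requests share a cached block only if every token up to the end of that block matches. The sketch below illustrates that chaining with `hashlib` and a toy block size of 4; vLLM's real implementation differs in hash function, block size, and the extra keys it mixes in (for example LoRA adapters or multimodal inputs), and the function names here are hypothetical.

```python
import hashlib

BLOCK_SIZE = 4  # toy block size so the example stays small


def block_hashes(tokens: list[int]) -> list[str]:
    """One hash per full block; each hash folds in the previous block's hash."""
    hashes: list[str] = []
    parent = ""
    for start in range(0, len(tokens) - BLOCK_SIZE + 1, BLOCK_SIZE):
        block = tokens[start:start + BLOCK_SIZE]
        parent = hashlib.sha256(f"{parent}|{block}".encode()).hexdigest()
        hashes.append(parent)
    return hashes  # a trailing partial block is never hashed, so never shared


def cached_prefix_tokens(cache: set[str], tokens: list[int]) -> int:
    """Leading tokens covered by consecutive cache hits, counted in whole blocks."""
    hit = 0
    for h in block_hashes(tokens):
        if h not in cache:
            break
        hit += BLOCK_SIZE
    return hit


cache = set(block_hashes([1, 2, 3, 4, 5, 6, 7, 8, 9]))  # two full blocks; token 9 is left over
print(cached_prefix_tokens(cache, [1, 2, 3, 4, 5, 6, 7, 0]))  # 4
```

The block granularity is the main structural difference: a hash-based cache reports matches in whole blocks, whereas a radix tree can match at token granularity.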

MAX (Modular)

GitHub: modular/modular (~25.6K stars) · License: Apache 2.0 + LLVM Exceptions (open-source kernels, stdlib, model architectures, serving library); Modular Community License (compiler binary) · Latest: v26.2 (Mar 2026) · Website: Modular

Description

MAX takes a fundamentally different approach from vLLM and SGLang. Where other engines build on top of CUDA libraries (cuBLAS, cuDNN, FlashAttention, FlashInfer), MAX is the only fully vertically integrated inference stack built without a CUDA dependency. From GPU kernels (Mojo) to model serving (MAX Serve) to cluster orchestration (BentoML + Modular Cloud), the entire inference pipeline is built from the ground up on MLIR, with no reliance on hardware-specific libraries.

Note: MAX as a platform is broader than a serving engine. It includes a PyTorch-like model development API (model.compile(), eager mode) that is more comparable to PyTorch itself. MAX Serve is the inference serving component that directly competes with vLLM and SGLang. For simplicity, this post refers to both under the "MAX" umbrella, as end users typically interact with the full stack.

MAX is built by Modular AI, co-founded in 2022 by Chris Lattner (creator of LLVM, Clang, Swift, and MLIR) and Tim Davis (co-creator of TensorFlow Lite, who scaled on-device ML to billions of devices at Google) and valued at $1.6B. Mojo, Modular's systems programming language built on MLIR, enables hardware-agnostic kernels that target NVIDIA, AMD, Apple Silicon, and CPU from a single codebase, with Docker images under 700MB.

Modular has open-sourced over 750,000 lines of Mojo code under Apache 2.0 with LLVM Exceptions, including production-grade GPU kernels, the full standard library, model architectures, and the MAX serving library. Modular has committed to open-sourcing the Mojo compiler itself in 2026, alongside the Mojo 1.0 release. In February 2026, Modular acquired BentoML (the open-source model deployment framework used by 10,000+ organizations), extending the stack with production deployment and cloud orchestration.

MAX supports 500+ models from Hugging Face, including text, vision-language (Qwen2.5-VL, Kimi VL, Gemma 3/4), and image generation (FLUX).

Pros

- Only inference stack built entirely without CUDA — Mojo kernels replace cuBLAS, cuDNN, and FlashAttention with a single portable codebase; matmul kernels have hit 1,772 TFLOPS on B200, exceeding cuBLAS

- Competitive or superior throughput — on NVIDIA L40 with Qwen3-8B: MAX completed 500 prompts in 50.6s vs SGLang's 54.2s and vLLM's 58.9s (16% faster than vLLM); on Vast.ai with Llama 3.1 8B: 89.9 tok/s vs vLLM's 75.9 (18% faster) with nearly half the TTFT

- Tightest tail latency — p99 TTFT of 13.1ms vs vLLM's 23.6ms on L40 benchmarks

- Hardware-portable — Mojo kernels compile to NVIDIA, AMD, Apple Silicon, and CPU from one codebase; no need to maintain separate CUDA/ROCm implementations

- Smallest container footprint — Docker images under 700MB, significantly lighter than vLLM or SGLang

- State-of-the-art image generation — MAX natively serves diffusion models (FLUX.2, SDXL) alongside LLMs in the same container and API, with 4.1× faster inference than torch.compile on B200

- Custom kernel development — PyTorch-like eager mode with model.compile() for writing custom Mojo kernels, with full open-source kernel implementations as reference

- Deep open-source compiler roots — led by Chris Lattner, creator of LLVM (which vLLM was named after); the same community-driven approach that made LLVM the industry standard is now being applied to MAX and Mojo

- $380M in funding — well-capitalized, with a long runway and a strong engineering team (337 employees)
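The percentage claims in this comparison are ratios of the quoted benchmark numbers, and the direction of the comparison matters. This plain arithmetic sketch uses only figures stated in this article: it shows that "X% faster" and "Y% slower" differ for the same gap, and that for a fixed workload (500 prompts), throughput is proportional to 1 / completion time. The helper names are hypothetical.

```python
def pct_faster(fast: float, slow: float) -> float:
    """How much higher the faster system's rate is, relative to the slower one."""
    return (fast / slow - 1) * 100


def pct_slower(fast: float, slow: float) -> float:
    """How much lower the slower system's rate is, relative to the faster one."""
    return (1 - slow / fast) * 100


# H100 / Llama 3.1 8B batch throughput (tok/s) quoted for SGLang and vLLM.
sglang_tps, vllm_tps = 16_200, 12_500
print(f"SGLang vs vLLM: +{pct_faster(sglang_tps, vllm_tps):.1f}% / "
      f"-{pct_slower(sglang_tps, vllm_tps):.1f}%")  # +29.6% / -22.8%

# L40 / Qwen3-8B: seconds to finish the same 500 prompts, so rate ~ 1 / time.
max_secs, vllm_secs = 50.6, 58.9
print(f"MAX vs vLLM: +{pct_faster(1 / max_secs, 1 / vllm_secs):.1f}%")  # +16.4%
```

The same helper reproduces the Vast.ai figure: pct_faster(89.9, 75.9) is about 18.4%.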

Cons

- Hardware-dependent performance — excels on NVIDIA B200 and AMD MI355X, but performance varies across GPU generations; not universally fastest on every hardware target

- Mojo compiler not yet open-source — committed to open-sourcing in 2026 alongside Mojo 1.0; the standard library, kernels, model architectures, and serving library are already open source (750K+ lines)

- Younger ecosystem — less battle-testing in production than vLLM; fewer community-maintained model implementations

- Fewer supported architectures — 500+ models is impressive but still narrower than vLLM/SGLang for cutting-edge or niche models

- Mojo learning curve for kernel development — Mojo is designed as a Python superset for ease of adoption, but advanced GPU kernel development still requires learning new concepts

- Disaggregated inference and orchestration not in open source — features like disaggregated prefill/decode, KV-cache-aware routing, multi-model orchestration, and autoscaling across mixed GPU fleets are available through Modular Cloud, not in the open-source self-hosted Community Edition

Head-to-Head Comparison

| Feature | SGLang | vLLM | MAX (Modular) |
|---|---|---|---|
| GitHub Stars | ~25,000 | ~75,000 | ~25,600 |
| License | Apache 2.0 | Apache 2.0 | Apache 2.0 + LLVM Exc. (kernels/stdlib/serving); Modular Community License (compiler) |
| Commercial Entity | RadixArk ($400M val.) | Inferact ($150M raise) | Modular AI ($1.6B val.) |
| Core Innovation | RadixAttention (radix tree KV cache) | PagedAttention (virtual memory KV cache) | Full-stack MLIR compiler, no CUDA dependency |
| Batch Throughput (H100, Llama 3.1 8B) | ~16,200 tok/s | ~12,500 tok/s | Competitive (hardware-dependent) |
| Multi-Turn / Prefix Reuse | Best (10–20% gain, up to 6.4×) | Good (automatic since V1) | Good |
| Structured Output Speed | Fastest (xgrammar, 3–10×) | Standard | Standard |
| p99 TTFT (L40, Qwen3-8B) | ~18ms | ~23.6ms | ~13.1ms (best) |
| Concurrent Request Scaling | GIL-limited above ~150 | Best (C++ routing) | Good |
| Model Support | 60+ LLM families, 30+ multimodal, diffusion, TTS | Broadest (text, vision, audio, embedding, omni) | 500+ HuggingFace models |
| Hardware Support | NVIDIA, AMD, TPU, Intel, Ascend, Apple Silicon | NVIDIA, AMD, Intel, TPU, Trainium, ARM, IBM Z | NVIDIA, AMD, Apple Silicon, CPU |
| Kubernetes / Deployment | Community-driven | Production Stack + llm-d | Mammoth + BentoML |
| Container Size | ~5–8 GB | ~5–8 GB | <700 MB |
| Custom Kernel Dev | FlashInfer extensions | C++/CUDA extensions | Mojo (PyTorch-like ergonomics) |
| Diffusion Model Support | Yes (SGLang-Diffusion, Nov 2025) | Yes (vLLM-Omni, Nov 2025) | Yes (FLUX, 4.1× faster than torch.compile) |
| TTS / Audio Serving | Yes (Fish Audio S2) | Yes (vLLM-Omni, Fish Speech) | Limited |
| RL Training Integration | Yes (Miles by RadixArk) | No | No |
| Speculative Decoding | Yes | Yes (Roblox: 50% latency reduction) | Yes |
| Disaggregated Prefill/Decode | Yes (production on 96 H100s) | Yes (llm-d project) | Yes (Modular Cloud only) |
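The TTFT row is a tail percentile: p99 is the latency that 99% of requests stay at or under. Benchmark tools differ in how they pick or interpolate the percentile, so small gaps between reports can come from the method alone. This sketch uses the nearest-rank definition on hypothetical samples; it may not match the procedure used for the numbers in the table.

```python
import math


def percentile_nearest_rank(samples: list[float], p: float) -> float:
    """Nearest-rank percentile: smallest sample with at least p% of samples <= it."""
    if not samples or not 0 < p <= 100:
        raise ValueError("need at least one sample and 0 < p <= 100")
    ordered = sorted(samples)
    rank = math.ceil(p * len(ordered) / 100)  # 1-based rank
    return ordered[rank - 1]


# Hypothetical TTFT samples in ms: 98 fast requests and two slow outliers.
ttft_ms = [10.0] * 98 + [40.0, 55.0]
print(percentile_nearest_rank(ttft_ms, 50))  # 10.0
print(percentile_nearest_rank(ttft_ms, 99))  # 40.0
```

With only 100 samples, p99 is set by the second-slowest request, so tail figures are more stable when measured over larger runs.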

When to Use What

Choose SGLang if you're optimizing for multi-turn chatbots, RAG pipelines, structured JSON output, or TTS serving (especially with Fish Audio S2). SGLang's RadixAttention and xgrammar backend offer measurable performance advantages in these workloads, and RadixArk's commercial backing should help sustain long-term support.

Choose vLLM if you need the safest, most production-proven option with the broadest model and hardware compatibility. vLLM's 75K-star community, enterprise adoption (Amazon, Roblox, Stripe), and comprehensive Kubernetes support make it the lowest-risk choice for general-purpose LLM serving at scale.

Choose MAX if you're running multi-hardware environments (NVIDIA + AMD + CPU), care about container footprint and operational simplicity, or want to invest in custom kernel development with Mojo. MAX's compiler-driven approach offers unique flexibility, and the BentoML acquisition gives it the most complete deployment platform of the three.

What's Shaping Inference in 2026

Three trends are reshaping the competitive landscape:

Disaggregated prefill/decode has moved from experimental to standard. SGLang demonstrated production-scale P/D on 96 H100s for DeepSeek; vLLM's llm-d project (Red Hat, Google Cloud, IBM, NVIDIA) pushes Kubernetes-native disaggregation; and NVIDIA's Dynamo orchestrator integrates with all major engines.

Multi-modal serving is expanding rapidly. vLLM-Omni and SGLang-Diffusion both launched in late 2025, supporting diffusion models and TTS alongside traditional LLMs. The line between "LLM engine" and "general model server" is blurring.

Commercial consolidation is accelerating. RadixArk (~$400M valuation), Inferact ($150M raise for vLLM), and Modular ($1.6B valuation + BentoML acquisition) all signal that open-source inference has entered its enterprise monetization phase. HuggingFace TGI has entered maintenance mode, leaving SGLang, vLLM, and MAX as the three primary open-source inference engines heading into late 2026.

Sabrina is part of Fish Audio's support and marketing team, helping users get the most out of AI voice products while turning launches, updates, and customer insights into clear, practical content.

Read more from Sabrina Shu