The Best Text-to-Speech API for Chatbots and Voice Assistants in 2026

The demo version of your voice assistant sounds natural. You run the same 10 test phrases every time you evaluate a new TTS API, the responses are clean, and the voice feels almost human. Then you put it in front of real users. By the third exchange, something breaks. The pause before each response has stretched to 900 ms. The voice that sounded expressive in isolation now sounds flat on the fifth consecutive response. Users are tolerating the voice rather than talking with it.

TTS evaluation for chatbots and voice assistants is systematically optimistic because the conditions that break these products (sustained multi-turn interaction under real network load) are harder to simulate than a single-request quality test.

What single-turn demos don't measure

Three factors determine whether a TTS API works for conversational AI, and none of them is well represented in a 10-second clip:

Turn-taking latency under load. A voice assistant feels responsive when the pause between the user's input and the spoken response is under 400 ms. Most TTS APIs achieve that in a lightly loaded test environment. The question is what happens when 200 users are in active conversations at the same time. Latency spikes under concurrency are the main complaint in production voice assistant deployments.

The human perception threshold for a conversational response is roughly 400-500 ms. Past that point, users start filling the silence by speaking, and the two sides talk over each other. This is not a UX preference; it is a physiological limit. When we ran a load test with 50 simulated concurrent conversations on a mid-tier platform, TTFB jumped from 180 ms to 2.8 seconds. The voice assistant went from responsive to broken without warning, and nothing in the vendor's documentation mentioned that the latency profile would change so drastically under concurrent load.
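A load test like the one described above can be sketched in a few lines. The following is a minimal illustration, not the harness used for the numbers in this article: `fake_tts_stream` is a hypothetical stand-in for a real streaming TTS client, and `time_to_first_byte` and `load_test` are names invented here. Because the fake stream has no shared bottleneck, its numbers stay flat; the point is the measurement shape (time to first chunk, summarized as percentiles per concurrency level).

```python
import asyncio
import math
import statistics
import time
from typing import AsyncGenerator, Callable, Dict


async def fake_tts_stream(text: str) -> AsyncGenerator[bytes, None]:
    """Hypothetical stand-in for a streaming TTS call; swap in a real client."""
    await asyncio.sleep(0.01)   # simulated delay before the first audio chunk
    yield b"\x00" * 320
    await asyncio.sleep(0.005)  # simulated delay before the next chunk
    yield b"\x00" * 320


async def time_to_first_byte(
    stream_fn: Callable[[str], AsyncGenerator[bytes, None]], text: str
) -> float:
    """Seconds from issuing the request until the first audio chunk arrives."""
    start = time.perf_counter()
    stream = stream_fn(text)
    try:
        await stream.__anext__()  # raises StopAsyncIteration if no audio arrives
        return time.perf_counter() - start
    finally:
        await stream.aclose()     # stop the stream; only the first chunk matters


async def load_test(
    stream_fn: Callable[[str], AsyncGenerator[bytes, None]],
    concurrency: int,
    text: str = "Yes, I can help with that.",
) -> Dict[str, float]:
    """Start `concurrency` requests at once and summarize TTFB in milliseconds."""
    samples = await asyncio.gather(
        *(time_to_first_byte(stream_fn, text) for _ in range(concurrency))
    )
    ordered = sorted(samples)
    p95_index = max(0, math.ceil(0.95 * len(ordered)) - 1)
    return {
        "concurrency": concurrency,
        "p50_ms": statistics.median(ordered) * 1000,
        "p95_ms": ordered[p95_index] * 1000,
        "max_ms": ordered[-1] * 1000,
    }


if __name__ == "__main__":
    for level in (1, 10, 50):
        print(asyncio.run(load_test(fake_tts_stream, level)))
```

Comparing the p95 at 1, 10, and 50 concurrent requests against a real endpoint is what exposes the kind of jump described above; a single-request measurement cannot.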

Multi-turn voice consistency. Some TTS models produce slightly different prosody for the same text on repeated calls. In a single-turn interaction, nobody notices. In a 10-turn conversation, the voice accumulates subtle inconsistencies that make it sound less like a coherent character and more like a system generating responses.

Production teams have a name for this problem: persona collapse. We ran into it while testing a popular TTS API for a customer service chatbot. By the sixth turn of the conversation, the originally warm customer service voice had turned into something that sounded like a news anchor who had just woken up. The warmth was gone. The pacing was off. The voice that felt intentional in testing felt arbitrary in use. We eventually solved the multi-turn drift on Fish Audio by tuning specific parameters, but no documentation had warned us that we would need to spend time on it.

Emotional range across response types. A conversational AI handles greetings, explanations, corrections, and apologies. The TTS voice has to modulate appropriately across all of them, not just sound good reading a neutral statement.

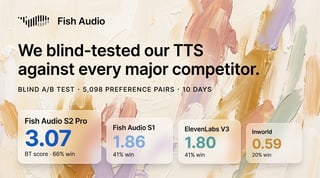

TTS API comparison for conversational AI

| Platform | TTFB | Streaming | Multi-turn consistency | Voice cloning | Languages | Concurrent sessions |
|---|---|---|---|---|---|---|
| Fish Audio | Millisecond-level | Yes | High | Yes (15-second sample) | 30+ | High |
| ElevenLabs | Competitive | Yes | High | Yes | 30+ | Moderate |
| Azure TTS | Moderate | Enterprise tier | High | Limited | 100+ | Enterprise |
| Google TTS | Moderate | Limited | High | No | 40+ | High |
| Amazon Polly | Moderate | Yes | High | No | 20+ | High |

Fish Audio: Latency and consistency for multi-turn conversations

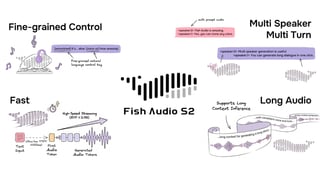

The two requirements that most directly determine the quality of a voice assistant are TTFB and streaming support. Fish Audio's millisecond-level time to first byte (TTFB), combined with streaming delivery, means users hear the voice start within 150-200 ms on a normal connection. That is inside the threshold where turn-taking feels natural rather than delayed.

Streaming matters differently for conversational AI than for content TTS. For a voice assistant, the first words of a response carry the most semantic weight: "Yes, I can help with that" versus "Sorry, that's not something I can do." With streaming, those first words arrive in under 200 ms. The user understands the direction of the response before the full sentence has been generated. That is qualitatively different from waiting 800 ms for the complete audio to be ready before anything plays.

The architecture that makes this work connects the LLM's output stream directly to the TTS input stream. Instead of waiting for the language model to finish its full response, you send text fragments to Fish Audio as they are generated. The LLM streaming pipeline and the TTS streaming pipeline run in parallel, so total latency drops to roughly the latency of the slower stage rather than the sum of both. This is how you get end-to-end latency under 500 ms in a real conversational deployment.
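The parallel arrangement described above can be sketched with a producer and a consumer joined by a queue. This is a minimal illustration under stated assumptions: `fake_llm_stream` and `fake_tts` are hypothetical stand-ins that only sleep and return placeholder bytes, not the Fish Audio API or any real LLM client. The `events` log is there only to show that the first audio is ready before the LLM has finished generating.

```python
import asyncio
from typing import AsyncGenerator, List, Optional


async def fake_llm_stream(
    fragments: List[str], delay: float = 0.01
) -> AsyncGenerator[str, None]:
    """Hypothetical LLM that emits one text fragment every `delay` seconds."""
    for fragment in fragments:
        await asyncio.sleep(delay)
        yield fragment


async def fake_tts(fragment: str, delay: float = 0.005) -> bytes:
    """Hypothetical TTS call; returns placeholder bytes instead of audio."""
    await asyncio.sleep(delay)
    return fragment.encode("utf-8")


async def run_pipeline(fragments: List[str], events: List[str]) -> List[bytes]:
    """Run text generation and synthesis concurrently, joined by a queue."""
    queue: "asyncio.Queue[Optional[str]]" = asyncio.Queue()
    audio: List[bytes] = []

    async def produce() -> None:
        async for fragment in fake_llm_stream(fragments):
            await queue.put(fragment)  # hand text over as soon as it exists
        events.append("llm_done")
        await queue.put(None)          # sentinel: no more text is coming

    async def consume() -> None:
        while True:
            fragment = await queue.get()
            if fragment is None:
                break
            audio.append(await fake_tts(fragment))
            events.append(f"audio:{len(audio) - 1}")

    await asyncio.gather(produce(), consume())
    return audio


if __name__ == "__main__":
    log: List[str] = []
    chunks = asyncio.run(
        run_pipeline(["Yes, ", "I can ", "help ", "with that."], log)
    )
    print(b"".join(chunks).decode("utf-8"))
    print(log)
```

Because synthesis of fragment N overlaps with generation of fragment N+1, the wall-clock time tracks the slower stage plus one fragment of the faster one, which is the effect the paragraph above relies on.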

Developer note: Don't send long LLM responses as a single TTS call. Split them at natural sentence boundaries and stream them as shorter TTS calls in sequence. Audio starts playing sooner, and users get a natural pause point at which to interrupt, which is what happens in real conversations.
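One way to apply the note above is to buffer streamed LLM text and release it one complete sentence at a time. The sketch below is an assumption-laden illustration: `sentences_from_fragments` is a name invented here, and the boundary rule (a period, exclamation mark, or question mark followed by whitespace) is deliberately naive, so abbreviations such as "Dr. Smith" would be split incorrectly.

```python
import re
from typing import Iterable, Iterator

# A sentence ends at '.', '!' or '?' followed by whitespace (naive rule).
_SENTENCE_BOUNDARY = re.compile(r"(?<=[.!?])\s+")


def sentences_from_fragments(fragments: Iterable[str]) -> Iterator[str]:
    """Yield complete sentences as soon as streamed text contains them."""
    buffer = ""
    for fragment in fragments:
        buffer += fragment
        parts = _SENTENCE_BOUNDARY.split(buffer)
        # Every part except the last is followed by a boundary, so it is complete.
        for sentence in parts[:-1]:
            if sentence:
                yield sentence
        buffer = parts[-1]  # possibly unfinished tail; keep accumulating
    if buffer.strip():
        yield buffer.strip()  # flush whatever remains when the stream ends


if __name__ == "__main__":
    streamed = ["Sure, I can ", "help. Your order ", "shipped today! Any", "thing else?"]
    for sentence in sentences_from_fragments(streamed):
        print(sentence)
    # Sure, I can help.
    # Your order shipped today!
    # Anything else?
```

Each yielded sentence would then become its own short TTS call, played in order.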

High-concurrency support means the latency profile you observe during development is the one users actually experience. The documented case of a conversational chatbot achieving end-to-end latency under 500 ms with Fish Audio reflects real-world conditions, not an optimized benchmark environment.

Voice cloning adds a dimension that matters specifically for branded assistants and product identities. Instead of picking from a catalog of generic voices, you can create a specific voice persona that is consistent with your product's identity. The 15-second sample requirement makes this practical without professional recording sessions. The cloned voice works across the 30+ supported languages, so a single persona's voice scales to international deployments without re-recording.

Fish Audio's voice catalog is large, with more than 2,000,000 community voices, and gives you immediate options if you don't want to clone. It is worth noting, though, that the catalog skews toward certain vocal profiles. If you need a very specific regional accent or a highly distinctive character voice, you may have to clone one rather than find it in the catalog, which adds a step to setup. It is not a blocker, but it is a realistic expectation to have before you start.

API documentation at docs.fish.audio.

ElevenLabs: The quality case for English voice assistants

Frankly, if you are building an immersive companion AI in English and the voice itself is the product, ElevenLabs' emotional range is still the benchmark. The difference between how ElevenLabs and most other platforms handle hesitation, emphasis, and emotional subtext in English is audible. It is not marginal. For a product where the voice persona is central to the user experience (a companion app, a storytelling assistant, a therapeutic support tool), ElevenLabs' English output quality justifies the trade-offs.

Those trade-offs are real. The tiered pricing model means busy periods push you into higher plan tiers, and for products with irregular usage, billing becomes unpredictable. Streaming works well under standard conditions, but concurrency at scale is where Fish Audio has a structural advantage. For a voice assistant that handles English only and has predictable conversation volume, ElevenLabs is the strongest option on pure output quality. For anything multilingual or high-concurrency, that calculation changes.

Azure TTS: The enterprise deployment path

Azure Neural TTS quality has reached a competitive level for conversational applications. Its reliability and enterprise SLA make it the default choice for organizations already running on Azure infrastructure.

Streaming is available but typically requires enterprise-tier access. Voice cloning is complex to set up and is not designed for the kind of rapid voice creation that content creators or smaller development teams need. If your use case is an enterprise IVR system or a large-scale customer service bot with stable, well-defined voice requirements, Azure works well. For more experimental conversational AI development, the setup overhead slows iteration.

Voice design patterns that improve conversational quality

Platform selection is one lever. How you configure the voice interaction is another.

Use streaming from the first response. Don't wait to confirm that the complete audio is available. Start playing the first chunk and buffer the rest. The conversational feel comes from fast first audio, not fast complete audio.

Match voice selection to the register of the use case. The voice of a companion AI and the voice of a customer service bot should sound different. The emotional profile matters: warmer for companion apps, more measured for information delivery, more animated for consumer applications.

Keep individual responses short. TTS quality per unit of audio is higher for short, complete sentences. Long responses introduce more opportunities for prosodic inconsistency. If your LLM generates a 4-sentence response, consider whether sending it as 4 separate TTS calls (played in sequence) gives better voice quality than a single call with a 4-sentence input.

Pre-generate static responses. Greetings, acknowledgments, and transitions ("Let me check that for you") are worded the same way every time. Generate them once and serve them from cache. You eliminate API latency entirely for your most frequent utterances.
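A cache for static phrases can be as small as a dictionary keyed by voice and text. The sketch below is illustrative only: `StaticPhraseCache` is a name invented here, and the `synthesize` callable is a placeholder for whatever TTS client you use, assumed to take a voice ID and text and return audio bytes.

```python
import hashlib
from typing import Callable, Dict, Iterable, Optional


class StaticPhraseCache:
    """In-memory cache of pre-generated audio for fixed phrases."""

    def __init__(self, synthesize: Callable[[str, str], bytes]) -> None:
        # `synthesize(voice_id, text) -> bytes` is an assumed signature.
        self._synthesize = synthesize
        self._store: Dict[str, bytes] = {}

    @staticmethod
    def _key(voice_id: str, text: str) -> str:
        # Collapse whitespace so trivially different inputs share one entry.
        normalized = " ".join(text.split())
        raw = f"{voice_id}\x00{normalized}".encode("utf-8")
        return hashlib.sha256(raw).hexdigest()

    def warm(self, voice_id: str, phrases: Iterable[str]) -> None:
        """Synthesize each phrase once, ahead of time (for example at startup)."""
        for phrase in phrases:
            key = self._key(voice_id, phrase)
            if key not in self._store:
                self._store[key] = self._synthesize(voice_id, phrase)

    def get(self, voice_id: str, text: str) -> Optional[bytes]:
        """Return cached audio, or None so the caller can fall back to live TTS."""
        return self._store.get(self._key(voice_id, text))


if __name__ == "__main__":
    def fake_synthesize(voice_id: str, text: str) -> bytes:
        # Placeholder "audio"; a real client would call the TTS API here.
        return f"{voice_id}:{text}".encode("utf-8")

    cache = StaticPhraseCache(fake_synthesize)
    cache.warm("brand-voice", ["Let me check that for you.", "Thanks for waiting."])
    print(cache.get("brand-voice", "Let me check that for you.") is not None)
    print(cache.get("brand-voice", "Something dynamic"))
```

Keying on the voice as well as the text matters: changing the brand voice should invalidate every cached clip rather than silently mixing two voices in one conversation.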

Developer note: Voice assistants need interruption handling. If a user speaks while TTS is playing, the audio has to stop cleanly. Implement this before you test with real users, not after; interruption UX is the number one factor that makes voice assistants feel unnatural.
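Interruption handling (often called barge-in) can be sketched as a race between playback and a "user started speaking" signal. The example below makes several assumptions: `fake_audio_chunks` stands in for a TTS audio stream, appending to a list stands in for writing to an audio device, and the `asyncio.Event` would in practice be set by your voice activity detector. None of these names come from a specific SDK.

```python
import asyncio
from typing import AsyncGenerator, AsyncIterator, List


async def fake_audio_chunks(
    count: int, delay: float = 0.01
) -> AsyncGenerator[bytes, None]:
    """Hypothetical TTS audio stream: one chunk every `delay` seconds."""
    for index in range(count):
        await asyncio.sleep(delay)
        yield f"chunk-{index}".encode("utf-8")


async def play(chunks: AsyncIterator[bytes], played: List[bytes]) -> None:
    """Stand-in for playback; real code would write to the audio device."""
    try:
        async for chunk in chunks:
            played.append(chunk)
    except asyncio.CancelledError:
        # A real player would flush or stop the output device here.
        raise


async def speak_with_barge_in(
    chunks: AsyncIterator[bytes],
    user_started_speaking: asyncio.Event,
    played: List[bytes],
) -> bool:
    """Play audio until it ends or the user speaks; return True if interrupted."""
    playback = asyncio.create_task(play(chunks, played))
    barge_in = asyncio.create_task(user_started_speaking.wait())
    done, _pending = await asyncio.wait(
        {playback, barge_in}, return_when=asyncio.FIRST_COMPLETED
    )
    interrupted = barge_in in done and playback not in done
    for task in (playback, barge_in):
        if not task.done():
            task.cancel()  # stop whichever side lost the race
    await asyncio.gather(playback, barge_in, return_exceptions=True)
    if not interrupted:
        playback.result()  # surface any playback error instead of hiding it
    return interrupted


async def demo() -> None:
    played: List[bytes] = []
    speaking = asyncio.Event()
    # Simulate the user starting to talk 35 ms into a 100 ms response.
    asyncio.get_running_loop().call_later(0.035, speaking.set)
    interrupted = await speak_with_barge_in(fake_audio_chunks(10), speaking, played)
    print(f"interrupted={interrupted}, chunks played={len(played)} of 10")


if __name__ == "__main__":
    asyncio.run(demo())
```

Cancelling the playback task rather than letting it drain is what makes the stop feel clean: no further chunks reach the device once the user has started speaking.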

Matching platform to chatbot type

Companion AI and social bots: Emotional range and voice naturalness matter more than any other variable. Choose Fish Audio or ElevenLabs. Fish Audio's advantage grows if you need multilingual support or a custom character voice.

Customer service bots: Multilingual support and reliability matter most. Fish Audio handles 30+ languages with a single API and consistent quality. High concurrency is vital for customer service applications that see volume spikes.

IVR and phone systems: Latency requirements are somewhat looser than for web or app voice assistants. SSML control over pronunciation and pacing matters more. Azure or Amazon Polly are well suited to the telephony channel specifically.

Information assistants (FAQ bots, knowledge bots): The voice should sound authoritative and clear. A neutral, measured voice from any of the major platforms works well. Latency and cost are the main differentiators here.

Frequently asked questions

What TTS latency do I need for a voice chatbot to feel natural? A TTFB (time to first byte, which in practice is the time to first audio) under 400 ms keeps conversational turn-taking natural. Under 200 ms feels immediate. Over 600 ms, users start talking before the bot finishes or wait in awkward silence. Fish Audio's millisecond-level TTFB keeps responses in the natural range.

Can I create a custom brand voice for my voice assistant? Yes. Fish Audio's voice cloning creates a brand voice from a 15-second recording, and all TTS output is then generated in that voice. The clone works in 30+ languages, so a single brand voice scales to international deployments.

Does TTS streaming work with conversational AI pipelines? Yes, and it is the recommended architecture. Streaming from Fish Audio means the user hears the start of a response while the rest is still being generated. Combined with streaming text generation from an LLM, end-to-end latency from user input to audible response can be under 500 ms.

What happens to TTS quality in a long conversation (more than 10 turns)? Voice consistency across turns is determined by the TTS model, not by the length of the conversation. Fish Audio's model produces consistent prosody on repeated calls, which avoids the voice drift that some platforms show in multi-turn sessions.

Is voice cloning worth it for a customer service chatbot? For branded chatbots where a consistent corporate identity matters, yes. A cloned voice that matches your brand's communication style is more effective than picking from a generic catalog. Fish Audio's 15-second minimum sample makes this practical without a professional recording budget.

Which TTS API best handles multiple simultaneous chatbot conversations? Fish Audio's high-concurrency support is designed for exactly this. The latency profile stays consistent under concurrent load. Azure and Google also handle high concurrency well, though with different trade-offs between quality and features.

Conclusion

For conversational AI, choosing a TTS API comes down to two questions: can it deliver audio fast enough for turn-taking to feel natural, and can it sustain that performance when hundreds of conversations are happening at once?

Fish Audio's millisecond-level TTFB, streaming support, high concurrency, and voice cloning make it the most complete option for conversational deployments. ElevenLabs fits English-centric use cases where the voice itself is part of the product. Azure and Google fit enterprise or infrastructure-aligned deployments where those ecosystems already define the architecture.

Test under concurrent load before you commit. A voice assistant that works well with 1 user does not predict behavior with 500. Integration details and API documentation are at docs.fish.audio.

Kyle is a Founding Engineer at Fish Audio and UC Berkeley Computer Scientist and Physicist. He builds scalable voice systems and grew Fish into the #1 global AI text-to-speech platform. Outside of startups, he has climbed 1345 trees so far around the Bay Area. Find his irresistibly clouty thoughts on X at @kile_sway.

Read more from Kyle Cui