Which Text-to-Speech API Has the Lowest Latency for Real-Time Applications?

Your voice assistant works well on your laptop over WiFi. You demo it, people love it, and you ship it. Then a user opens it on a mobile connection, and there is a 1.8-second pause before the audio starts. The conversation feels like a phone call with a bad satellite delay. They close the app.

TTS latency is not a minor optimization. For real-time applications, it is the difference between an experience that feels like a conversation and one that feels like submitting a form and waiting for a reply. Spec sheets won't tell you this plainly: most benchmark numbers are measured on the vendor's ideal network, over a connection that was already warmed up.

The Production Surprise I Wasn't Ready For

I learned this the hard way on a voice assistant project about eighteen months ago. During development, I consistently saw TTFB (time to first byte) in the 180-220 ms range. That was perfectly acceptable, the conversation felt natural, and I was happy. I went into load-testing week feeling confident.

Then we simulated 200 concurrent users. One of the APIs, which had performed beautifully at 5 concurrent sessions, jumped from that comfortable 180 ms to 2.3 seconds. There was no warning in the docs and no gradual degradation, just sharp latency spikes that made the assistant sound as if it were working through a hard math problem before every reply. We caught it only a week before launch, which is not when you want to be reconsidering your TTS provider.

The fix involved switching to an API with a better concurrency architecture and rebuilding part of the audio pipeline to use chunked HTTP streaming instead of waiting for the complete file to be delivered. That change alone, consuming chunks as they arrive instead of waiting for the full file, cut the perceived time to first response from 1.2 seconds to about 450 ms. That 450 ms mark is roughly the point at which listeners stop consciously registering the gap as a "pause." Below it, the conversation simply flows; above it, users start adjusting their behavior.

Developer note: benchmarking on your development machine tells you nothing useful about production latency. Test from a cloud VM in the region where your users are, and under concurrent load. The gap between "works on my machine" and "works for 200 concurrent users in Southeast Asia" is where most latency surprises live.
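A minimal harness for that kind of test might look like the sketch below. It assumes you wrap your provider's streaming endpoint in a function `stream_fn(text)` that yields audio chunks as they arrive (a hypothetical interface, not any vendor's SDK). It fires `concurrency` requests at once and reports TTFB percentiles rather than a single average, because tail latency is what users notice under load.

```python
import math
import statistics
import time
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict, Iterable, List

# Hypothetical interface: takes text, yields audio chunks as they arrive.
StreamFn = Callable[[str], Iterable[bytes]]


def time_to_first_chunk(stream_fn: StreamFn, text: str) -> float:
    """Seconds from issuing one request until its first audio chunk arrives."""
    start = time.perf_counter()
    chunks = iter(stream_fn(text))
    try:
        next(chunks)
    except StopIteration:
        raise RuntimeError("stream produced no audio") from None
    elapsed = time.perf_counter() - start
    for _ in chunks:  # drain the rest so the server does the full amount of work
        pass
    return elapsed


def load_test(stream_fn: StreamFn, text: str, concurrency: int,
              rounds: int = 1) -> Dict[str, float]:
    """Run `rounds` waves of `concurrency` simultaneous streams; summarize TTFB in ms."""
    samples: List[float] = []
    with ThreadPoolExecutor(max_workers=concurrency) as pool:
        for _ in range(rounds):
            futures = [pool.submit(time_to_first_chunk, stream_fn, text)
                       for _ in range(concurrency)]
            samples.extend(f.result() * 1000 for f in futures)
    samples.sort()
    p95 = samples[math.ceil(0.95 * len(samples)) - 1]  # nearest-rank percentile
    return {"n": len(samples), "p50_ms": statistics.median(samples),
            "p95_ms": p95, "max_ms": samples[-1]}
```

Run it from a VM in your users' region, for example `load_test(my_stream, "Hello there", concurrency=200, rounds=5)`, where `my_stream` is your own wrapper. Then compare the p95 against the single-session number from your laptop.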

300 ms, 800 ms, 2 Seconds: Where the Experience Actually Breaks

Not all latency thresholds matter equally. Here is what the numbers mean in practice:

Under 150 ms: feels instant. Users don't perceive a gap between their input and the spoken response. This is achievable with local deployment or exceptionally fast streaming APIs.

150-400 ms: acceptable for voice assistants. Users notice a slight pause, but the conversational rhythm stays intact. Most well-optimized streaming TTS APIs land here under normal conditions.

400 ms to 1 second: the pause is audible and noticeable. Users adapt by waiting longer before speaking, which kills the natural flow of conversation. You can compensate with UI feedback, such as a listening indicator or an animation, but you can't hide it completely.

Over 1 second: the conversational model breaks. Users assume the system is still processing or has failed. This is the range where support tickets start arriving.
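If you want dashboards or alerts to use the same vocabulary as these tiers, a small helper can bucket measured TTFB values. This is a sketch. The boundary handling is an assumption, because the ranges above share their edges: here 150 ms counts as "acceptable", and exactly 400 ms and exactly 1 s fall into the lower tier.

```python
def latency_tier(ttfb_ms: float) -> str:
    """Bucket a measured TTFB (in milliseconds) into the article's experience tiers."""
    if ttfb_ms < 0:
        raise ValueError("TTFB cannot be negative")
    if ttfb_ms < 150:
        return "instant"
    if ttfb_ms <= 400:
        return "acceptable"
    if ttfb_ms <= 1000:
        return "noticeable pause"
    return "conversation breaks"


# The development-vs-load-test numbers from the story earlier in this article.
for ms in (180, 450, 2300):
    print(ms, latency_tier(ms))
```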

There is an important distinction most comparisons miss: time to first byte (TTFB) versus total generation time. A 500-word script can take 6 to 8 seconds to generate as a complete audio file. With streaming, the first audio chunk arrives within 80-150 ms and starts playing while generation continues. The user's perceived wait is roughly 80-150 ms, not 6 to 8 seconds.
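The gap between the two numbers is easy to see with a simulated stream. In the sketch below, `simulated_tts` stands in for a real streaming response and uses made-up timings: the first chunk arrives after `first_delay_s`, then one chunk arrives every `per_chunk_s`. `measure_stream` records when the first chunk arrives, which is the earliest a streaming client could start playback. It also records when the last chunk arrives, which is the earliest a client that waits for the whole file could start.

```python
import time
from typing import Iterable, Iterator, Tuple


def measure_stream(chunks: Iterable[bytes]) -> Tuple[float, float, int]:
    """Return (ttfb_s, total_s, total_bytes) for a stream of audio chunks.

    ttfb_s: first chunk arrived, so a streaming client can start playback.
    total_s: last chunk arrived, so a whole-file client can start playback.
    """
    start = time.perf_counter()
    ttfb = None
    total_bytes = 0
    for chunk in chunks:
        if ttfb is None:
            ttfb = time.perf_counter() - start
        total_bytes += len(chunk)
    total = time.perf_counter() - start
    if ttfb is None:
        raise RuntimeError("no audio received")
    return ttfb, total, total_bytes


def simulated_tts(n_chunks: int, first_delay_s: float,
                  per_chunk_s: float) -> Iterator[bytes]:
    """Stand-in for a streaming response (made-up timings, not measurements).

    Each chunk is 3,200 bytes: 100 ms of 16 kHz, 16-bit mono PCM.
    """
    time.sleep(first_delay_s)
    yield b"\x00" * 3200
    for _ in range(n_chunks - 1):
        time.sleep(per_chunk_s)
        yield b"\x00" * 3200
```

As an example, take 80 chunks (8 s of audio), with the first arriving after 100 ms and the rest at 80 ms intervals. `measure_stream` would report a TTFB of about 0.1 s and a total of about 6.4 s. The streaming client starts talking roughly six seconds sooner, even though both clients receive exactly the same bytes.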

This distinction alone decides whether a TTS API is usable in real time.

Latency and Streaming: Platform Comparison

| Platform | Approx. TTFB | Streaming | Concurrency | Self-hosting option | Uptime |
|---|---|---|---|---|---|
| Fish Audio | Millisecond-level | Yes | High | Yes (Fish Speech) | 99.9%+ |
| ElevenLabs | Competitive | Yes | Yes | No | High |
| Azure TTS | Moderate | Yes (enterprise tier) | High | No | Enterprise SLA |
| Google TTS | Moderate | Limited (basic API) | High | No | High |
| OpenAI TTS | Competitive | Yes | Moderate | No | High |

TTFB figures vary by region, connection quality, and whether the connection was already established. Test from the region where your users are, not from your development machine.

Fish Audio: Millisecond-Level TTFB and What It Means in Production

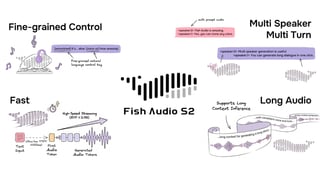

Fish Audio's API is designed for real-time delivery. Its time to first byte is at the millisecond level, which puts it in the range where audio starts streaming before the user consciously registers a wait.

Streaming support means the audio pipeline works the way browsers deliver video: chunks arrive and play while the rest is still being generated. For a response that takes 4 seconds to generate as a complete file, users hear audio begin in under 200 ms on a normal connection. On a weak 4G signal, we saw chunked streaming cut the effective time to first audio from 1.2 seconds to about 450 ms on the same API, just by switching from waiting for the full file to consuming the stream as it arrived.
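On the client, "play while generating" usually means decoupling the network reader from the audio output. That way, a slow chunk from the network doesn't stall playback of chunks already received. Below is a minimal sketch that uses a background thread and a bounded queue. It assumes `play` is your own (hypothetical) callback that writes PCM or encoded frames to the audio device.

```python
import queue
import threading
from typing import Callable, Iterable, List

_END = object()  # sentinel: the producer has finished (or failed)


def play_while_generating(chunks: Iterable[bytes], play: Callable[[bytes], None],
                          max_buffered: int = 64) -> int:
    """Read chunks on a background thread and pass each to `play` as soon as it
    is available, so playback overlaps with the rest of generation/download.
    Returns the number of chunks played; re-raises any error from the reader."""
    buf: queue.Queue = queue.Queue(maxsize=max_buffered)
    errors: List[BaseException] = []

    def producer() -> None:
        try:
            for chunk in chunks:
                buf.put(chunk)  # blocks only if playback falls far behind
        except Exception as exc:  # e.g. a dropped connection mid-stream
            errors.append(exc)
        finally:
            buf.put(_END)

    threading.Thread(target=producer, daemon=True).start()
    played = 0
    while True:
        item = buf.get()
        if item is _END:
            break
        play(item)
        played += 1
    if errors:
        raise errors[0]
    return played
```

Playback begins with the first chunk. The bounded queue keeps memory in check if the network delivers audio faster than the speaker plays it.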

Concurrency architecture matters at scale. Many TTS APIs start throttling as concurrent requests climb, which shows up as latency spikes during peak traffic. Fish Audio's high-concurrency support means the performance you measure in testing is closer to what you'll get when 500 users are talking to your product at once.

Fish Audio's latency performance is strong, but one real-world factor deserves a frank mention: it depends heavily on how far away your users are. If most of your users are in Europe and you aren't routing through a CDN or an edge deployment, the latency advantage over Azure's European data centers narrows. Choosing the regional endpoint and configuring the CDN matter as much as choosing the API itself.

The open-source option raises the ceiling entirely. Fish Speech, the underlying model, can be self-hosted. Self-hosting means the only latency is inference time on your own hardware, with no network hop to an external API. For latency-critical applications where every millisecond counts, this is the only option that gets you below what any cloud API can deliver. Inference latency on a modern GPU is typically under 100 ms for short responses.

One documented case: a developer integrated Fish Audio into a conversational AI chatbot and measured end-to-end latency consistently under 500 ms, including the network round trip, TTS generation, and audio delivery. Full API documentation is at docs.fish.audio.

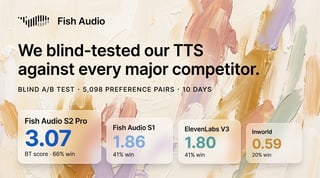

ElevenLabs: A Real Contender for English

ElevenLabs deserves credit here. Their streaming latency for English content is genuinely competitive: I have seen it come in under 200 ms TTFB in US East regions, which is impressive given the quality of the model they run. They have invested heavily in reducing TTFB, and for English content their quality-versus-latency trade-off is among the best available.

The limitation for real-time applications is that the latency advantage narrows for non-English content. There is also no self-hosting option if you need to go below what their cloud API delivers. At high concurrency, costs also grow faster than under Fish Audio's pricing model.

It is a strong choice for English-focused voice assistants where voice quality is the primary concern. Just make sure to test at realistic concurrency levels, not in single-user conditions.

Azure TTS and Google TTS: Reliable, but Not Optimized for Speed

Both Azure and Google run at moderate latency by default. Streaming is available in Azure but usually requires enterprise-tier access. Google's basic API does not offer true streaming in the sense that purpose-built real-time TTS platforms do.

Both are a good fit for applications where 500 ms to 1 second of latency is acceptable, such as IVR systems, in-app read-aloud features, and notification audio. They are not the right choice for conversational AI or for voice assistants where the response has to feel immediate.

Developer note: warm up your TTS API connection. The first request in a cold session pays the TCP handshake overhead, typically 30-100 ms depending on geographic distance (plus the TLS handshake on HTTPS endpoints). Later requests on the same connection don't pay this cost. DNS resolution adds another 20-60 ms if you don't cache it. In a voice assistant, this makes the first response noticeably slower than every response after it. Send a lightweight warm-up request when the app starts, before the user speaks.

Infrastructure Decisions That Cut Latency Regardless of Platform

Choosing the API matters, but so does how you use it. Here are some patterns that reduce perceived latency in real-time applications:

Pre-warm the connection. The first request to any API includes TCP handshake overhead (30-100 ms) plus DNS resolution (20-60 ms). Pre-warm by sending a request at app startup, not when the user first speaks. It's one of the easiest latency wins, and almost nobody does it by default.
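With Python's standard library, pre-warming can be as simple as opening a connection object at startup and reusing it for every request. The sketch below uses `http.client`. The host, port, and path are placeholders, and the sketch assumes your provider's endpoint supports HTTP/1.1 keep-alive. Servers close idle connections after a timeout, so an app that sits idle for a long time may need to warm again.

```python
import http.client
from typing import Dict, Optional


class WarmConnection:
    """One persistent connection to the TTS host, opened before the user speaks."""

    def __init__(self, host: str, port: Optional[int] = None,
                 https: bool = True, timeout: float = 10.0) -> None:
        cls = http.client.HTTPSConnection if https else http.client.HTTPConnection
        self.conn = cls(host, port, timeout=timeout)

    def warm(self) -> None:
        # Pays for DNS resolution and the TCP handshake (plus TLS for HTTPS)
        # now, at app startup, instead of on the user's first utterance.
        self.conn.connect()

    def post(self, path: str, body: bytes,
             headers: Optional[Dict[str, str]] = None) -> http.client.HTTPResponse:
        # Reuses the open socket when the server keeps the connection alive.
        # Read each response fully before sending the next request.
        self.conn.request("POST", path, body=body, headers=headers or {})
        return self.conn.getresponse()
```

Call `warm()` from your startup path (for example, while the UI is loading), then route synthesis requests through `post()`.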

Use WebSocket for interactive sessions and chunked HTTP transfer for one-off responses. WebSocket eliminates per-request HTTP overhead and is the right choice when you have a persistent session. For single-response use cases, such as a notification sound or a read-aloud feature, HTTP chunked transfer is simpler and works well.

Cache static phrases. Greetings, confirmations, error messages, and navigation prompts can be generated once and served from a cache. In most conversational apps, this eliminates API calls entirely for 30-50% of audio output.
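Here is a minimal in-memory version of that cache. It assumes a hypothetical `synthesize(text, voice_id)` function that calls your TTS API and returns the complete audio bytes. The cache keys on the voice as well as the text, because the same sentence in a different voice is a different asset. In production you would likely persist entries to disk or object storage so they survive restarts.

```python
from typing import Callable, Dict, Iterable, Tuple

SynthFn = Callable[[str, str], bytes]  # hypothetical: (text, voice_id) -> audio bytes


class PhraseCache:
    """Serve fixed prompts from memory; call the TTS API only for unseen text."""

    def __init__(self, synthesize: SynthFn) -> None:
        self._synthesize = synthesize
        self._store: Dict[Tuple[str, str], bytes] = {}
        self.hits = 0
        self.misses = 0

    @staticmethod
    def _key(text: str, voice_id: str) -> Tuple[str, str]:
        # Collapse whitespace so "Hello  there " and "Hello there" share one entry.
        return voice_id, " ".join(text.split())

    def get(self, text: str, voice_id: str) -> bytes:
        key = self._key(text, voice_id)
        if key in self._store:
            self.hits += 1
            return self._store[key]
        self.misses += 1
        audio = self._synthesize(key[1], voice_id)
        self._store[key] = audio
        return audio

    def prewarm(self, phrases: Iterable[str], voice_id: str) -> None:
        """Generate known static phrases ahead of time (at deploy or startup)."""
        for phrase in phrases:
            self.get(phrase, voice_id)
```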

Start streaming immediately. Don't wait for the full audio file. If your API supports streaming (and the ones worth using do), pipe the stream straight into the audio output and let it play while the rest is generated.
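A standard-library sketch of that pattern sends the request, then hands each piece of the response body to `sink` as soon as it is readable. `http.client` decodes chunked transfer encoding for you, and `HTTPResponse.read1()` (part of the buffered I/O interface that `HTTPResponse` implements) returns whatever data is available instead of waiting to fill a fixed-size buffer. The endpoint path and JSON payload are placeholders; real providers differ in request format, authentication headers, and audio encoding.

```python
import http.client
import json
from typing import Any, Callable, Dict


def stream_tts_to_sink(host: str, path: str, payload: Dict[str, Any],
                       sink: Callable[[bytes], None], port: int = 443,
                       https: bool = True, chunk_size: int = 4096) -> int:
    """POST a synthesis request and forward audio to `sink` as it arrives.

    Returns the number of bytes forwarded. `host`, `path`, and the payload
    fields are placeholders for your provider's streaming endpoint.
    """
    cls = http.client.HTTPSConnection if https else http.client.HTTPConnection
    conn = cls(host, port, timeout=30)
    try:
        conn.request("POST", path, body=json.dumps(payload).encode("utf-8"),
                     headers={"Content-Type": "application/json"})
        resp = conn.getresponse()
        if resp.status != 200:
            raise RuntimeError(f"TTS request failed: {resp.status} {resp.reason}")
        total = 0
        while True:
            # read1() returns as soon as some body data is available (up to
            # chunk_size) rather than blocking until chunk_size bytes arrive.
            chunk = resp.read1(chunk_size)
            if not chunk:
                break  # end of body
            sink(chunk)  # e.g. write to the audio device or decoder
            total += len(chunk)
        return total
    finally:
        conn.close()
```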

Match the API region to the user's region. A TTS API call from a user in Tokyo to a server in Virginia adds 150-200 ms of raw network latency before any processing starts. That isn't a TTS problem; it's a geography problem. Use regional endpoints where available, and if your provider doesn't offer them, a CDN or edge proxy can help.
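When a provider exposes several regional endpoints, one way to choose among them is to probe each endpoint from where the client actually runs and keep the fastest. The sketch below times a DNS lookup plus TCP connect. That measures network distance only, not synthesis speed. Region names and hostnames are placeholders, and the probe is injectable so you can swap in your own measurement.

```python
import socket
import time
from typing import Callable, Dict, Optional, Tuple


def tcp_connect_ms(host: str, port: int = 443, timeout: float = 2.0) -> Optional[float]:
    """Time a DNS lookup + TCP handshake to (host, port); None if unreachable."""
    start = time.perf_counter()
    try:
        with socket.create_connection((host, port), timeout=timeout):
            pass
    except OSError:
        return None
    return (time.perf_counter() - start) * 1000


def pick_endpoint(endpoints: Dict[str, Tuple[str, int]],
                  probe: Callable[[str, int], Optional[float]] = tcp_connect_ms,
                  attempts: int = 3) -> Tuple[str, float]:
    """Return (region, best_ms) for the region whose host connects fastest.

    Uses the minimum of several probes per host to filter out one-off jitter.
    """
    best: Optional[Tuple[str, float]] = None
    for region, (host, port) in endpoints.items():
        samples = [ms for ms in (probe(host, port) for _ in range(attempts))
                   if ms is not None]
        if not samples:
            continue  # unreachable from here; skip this region
        ms = min(samples)
        if best is None or ms < best[1]:
            best = (region, ms)
    if best is None:
        raise RuntimeError("no endpoint reachable")
    return best
```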

Self-host for hard latency ceilings. If your application genuinely requires latency under 100 ms, no cloud API delivers that reliably over an ordinary network. Local inference with an open-source model such as Fish Speech is the only architectural path to that level of performance.
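The arithmetic behind that claim is a simple budget: fixed per-request overheads are subtracted from the target before the model gets any time at all. The figures below reuse the ranges quoted in this article for a cold request from Tokyo to Virginia. The 30 ms same-region round trip is a hypothetical placeholder, not a measurement.

```python
from typing import Dict


def inference_headroom_ms(target_ms: float, overheads_ms: Dict[str, float]) -> float:
    """Milliseconds left for synthesis once fixed per-request overheads are paid.

    A negative result means the target is out of reach however fast the model is.
    """
    return target_ms - sum(overheads_ms.values())


# Cold request, Tokyo user -> Virginia endpoint (ranges quoted in this article:
# low end of DNS, far end of the TCP handshake, low end of the network latency).
cold_far = {"dns": 20, "tcp_handshake": 100, "network_rtt": 150}
# Warm, reused connection to a nearby region (30 ms RTT is a hypothetical figure).
warm_near = {"network_rtt": 30}
# Self-hosted inference next to the application: no external network hop.
self_hosted: Dict[str, float] = {}

for name, overheads in (("cold_far", cold_far), ("warm_near", warm_near),
                        ("self_hosted", self_hosted)):
    print(f"{name}: {inference_headroom_ms(100, overheads)} ms left of a 100 ms target")
```

Only the self-hosted case gives the model the full budget. In the other cases, every millisecond of network time comes straight out of what synthesis can use.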

Matching the Platform to the Application Type

Voice assistants and AI companions: streaming plus the lowest possible TTFB is non-negotiable. Choose Fish Audio or ElevenLabs, tested from the region where your users are.

Chatbot voice responses: 300-500 ms is usually acceptable. Any streaming-capable platform will do. Prioritize cost and voice quality over raw latency.

IVR and telephony: latency requirements are looser (500 ms to 1 second is acceptable). Reliability and SSML control matter more. Azure or Amazon Polly fit well here.

Notification and alert sounds: batch generation with caching is fine. Latency doesn't matter because the audio is generated ahead of time. Google's free tier handles this cost-effectively.

Real-time translation or live narration: this is the hardest case. Streaming plus local or near-local inference is the only way to reach acceptable latency. Self-host Fish Speech, or use the Fish Audio API from a geographically close endpoint.

Frequently Asked Questions

What counts as low latency for a TTS API in real-time applications? A TTFB (time to first byte) under 300 ms is generally acceptable for voice assistants and conversational AI. Under 150 ms feels instant. Over 500 ms breaks the natural turn-taking rhythm of conversation. Fish Audio's millisecond-level TTFB puts the platform in the fastest tier for real-time deployment.

Does streaming reduce TTS latency? Streaming reduces perceived latency dramatically. It doesn't change total generation time, but it starts delivering audio before generation finishes. A 500-word response that takes 8 seconds to generate in full starts playing in under 200 ms with streaming. The user experiences a start at 200 ms, not a completion at 8 seconds.

Can I reduce TTS latency by self-hosting the model? Yes. Self-hosting removes the network round trip to an external API entirely. Fish Audio's open-source Fish Speech model can run on your own infrastructure. Inference latency on modern hardware is typically under 100 ms for short responses, which is lower than any cloud API can consistently deliver.

Which TTS API works best for voice assistants that need fast responses? For most voice assistant deployments, Fish Audio's combination of millisecond-level TTFB, streaming, and high concurrency covers all the requirements. ElevenLabs is the alternative for English-focused assistants where voice quality is the primary concern.

How do I test TTS API latency accurately? Test from the region where your users are, not from your development machine. Use concurrent request loads that mirror your expected peak traffic. Measure TTFB specifically, meaning the time until audio starts arriving rather than the total response time. Run tests at different times of day to capture varying server load. The single-user number from your development machine is a best case, not reality.

Does TTS latency change under high concurrent load? Yes, for platforms whose architecture isn't built for concurrency. Fish Audio's high-concurrency support is designed to keep TTFB stable under load, which is the performance characteristic that matters for applications with many simultaneous users.

Conclusion

For real-time applications, choosing a platform is simpler than it looks: you need a TTS API that supports streaming and delivers TTFB under 300 ms from your deployment region.

Fish Audio's millisecond-level TTFB, native streaming, high concurrency, and open-source self-hosting option give it the widest range of deployment patterns for latency-critical applications. ElevenLabs is a real contender for English-focused use cases where voice quality is the top priority; don't dismiss it without testing.

Before committing to any integration, test the API from the region where your users are, under the concurrent load you actually expect. Latency numbers from a developer laptop in ideal conditions don't predict production behavior. This isn't a theoretical warning. In my experience, it is the specific failure mode that sinks more TTS integrations than any problem with the API's quality itself.

Start with the documentation at docs.fish.audio, and test streaming delivery directly against your application's latency threshold.

Kyle is a Founding Engineer at Fish Audio and UC Berkeley Computer Scientist and Physicist. He builds scalable voice systems and grew Fish into the #1 global AI text-to-speech platform. Outside of startups, he has climbed 1345 trees so far around the Bay Area. Find his irresistibly clouty thoughts on X at @kile_sway.

Read more from Kyle Cui