Best AI Voice Generators in 2026: What Actually Sounds Human (and What Doesn't)

Two hundred voices. Thirty languages. Sub-300ms latency. Every AI voice generator spec sheet reads as if the same marketing team wrote it. The numbers differ just enough to fill a comparison table, but they don't answer the question that actually matters: does this tool still sound human at the two-minute mark, or does it gradually flatten into a machine reading your script?

A feature page can't tell you that. Your ears pick it up within the first 90 seconds of a real production read.

Most Comparison Lists Rank on the Wrong Criteria

Scroll through ten "best AI voice generator" articles and you'll see the same criteria repeated: number of voices, number of languages, price per month. These metrics are easy to quantify, which is exactly why they dominate comparison tables. The problem is that they don't reliably predict whether a tool will perform well on your work.

Long-form consistency is the first and most important criterion. A voice that sounds warm for two sentences can turn monotone by the third paragraph. The pacing flattens. Emotional variation disappears. You end up with audio that technically delivers the words but lacks a human presence. No spec sheet captures this.

Mixed-language handling is the second blind spot. If your script drops a Spanish product name into an English sentence, or switches between English and Mandarin, many generators struggle. You may hear broken rhythm, mispronounced syllables, or abrupt accent shifts.

Emotion granularity is the third gap. Many tools offer "happy" or "sad" as presets. A product announcement calls for controlled enthusiasm, not an over-the-top announcer. A tutorial needs calm authority, not theatrical narration. The real performance differences show up in the gap between "has emotion controls" and "has emotion controls that sound natural."

7 AI Voice Generators, Ranked by What Happens After the Demo

We tested each platform with the same 800-word script in English, Mandarin, and Spanish. Here's how they held up under real production conditions:

| Tool | Voice Quality (Long-Form) | Emotion Control | Multilingual | API Latency | Starting Price |
|---|---|---|---|---|---|
| Fish Audio | Most natural, consistent over multiple minutes | Granular emotion tags | 80+ languages, SOTA cross-lingual | Sub-300ms streaming | Free / $11/month Plus |
| ElevenLabs | Strong in short form, can overact in long form | Good, needs tuning | 32 languages, weaker on mixed scripts | Fast | Free / $5/month Starter |
| Play.ht | Clean and stable | Limited | 20+ languages | Moderate | Free tier available |
| Resemble AI | Good expressiveness | Emotion prompts | Moderate coverage | Moderate | Pay-as-you-go |
| WellSaid Labs | Professional, consistent | Word-level granularity | English-focused | Fast | $50/month |
| Murf AI | Solid for corporate use | Basic | 20+ languages | Moderate | $19/month |
| LOVO (Genny) | Expressive, creator-focused | Emotion-based | 100+ languages | Moderate | Free tier available |

The table gives a quick overview. The details below explain the reasoning behind the ranking.

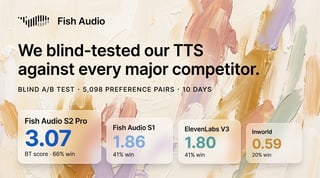

The $11 Tool That Sounds Like a $99 One

Fish Audio doesn't sound like what you'd expect from a platform that charges $11 a month. In testing, it produced the most natural-sounding voice cloning we've heard. It varied emotion consistently across multi-minute scripts without falling into the flat, robotic tone that plagues most generators past the 90-second mark. The S2 model currently ranks #1 on Elo ratings and independent benchmarks, and the difference is audible in real production work.

Four differentiators stood out:

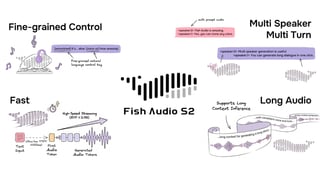

- The most expressive and controllable emotion system available. Instead of static sliders, you insert tags such as (cheerful), (serious), (whispering), or (thoughtful) directly into the script, and the delivery shifts naturally within the same take. The granularity here beats ElevenLabs and every other tool we tested. You aren't picking from a handful of presets; you're directing the performance. For content that moves from an explanation to a call to action, that flexibility matters more than raw voice count.

- Multilingual performance that doesn't break on mixed scripts. When a script mixed English and Chinese terminology, rhythm and pronunciation stayed stable without extensive phonetic corrections. Fish Audio supports more than 80 languages, and transitions between them sound like a bilingual speaker rather than two models stitched together. Voice cloning also works across languages: clone a voice from an English sample and it will speak Mandarin with the same natural timbre.

- Sub-300ms API with flat-rate pricing. Fish Audio's API delivers streaming response times fast enough for real-time conversational AI and interactive content. The flat-rate structure makes budgeting simpler than with credit-based systems. The S2 model is open-weight and built on the SGLang inference engine, so developers who need self-hosted deployment have that option (a commercial license is required).

- A library of more than 2,000,000 voices, plus 15-second cloning. Voice cloning needs only 15 seconds of sample audio to produce a clone that sounds closer to the original speaker than any competing tool we tested. For creators building brand voices or developers prototyping character dialogue, this cuts setup friction to nearly zero.
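The inline-tag approach is easy to picture as data: a tag switches the delivery until the next tag appears. Here's a minimal Python sketch of that idea, splitting a tagged script into (emotion, text) segments. The parser, the `split_by_emotion_tags` name, and the "neutral" default are hypothetical illustrations of the concept; they are not Fish Audio's implementation or API, and its actual tag handling may differ.

```python
import re

# Hypothetical: treat "(word)" tokens as emotion tags, as in the examples above.
TAG_PATTERN = re.compile(r"\((\w+)\)")


def split_by_emotion_tags(script: str, default: str = "neutral") -> list[tuple[str, str]]:
    """Split a tagged script into (emotion, text) segments.

    Text before the first tag gets the `default` label; each tag applies
    to everything after it until the next tag.
    """
    segments = []
    current = default
    pos = 0
    for match in TAG_PATTERN.finditer(script):
        text = script[pos:match.start()].strip()
        if text:
            segments.append((current, text))
        current = match.group(1)  # switch delivery for the following text
        pos = match.end()
    tail = script[pos:].strip()
    if tail:
        segments.append((current, tail))
    return segments


if __name__ == "__main__":
    script = (
        "(thoughtful) Most tools give you presets. "
        "(serious) Directing a performance is different. "
        "(cheerful) Try it with your own script today!"
    )
    for emotion, text in split_by_emotion_tags(script):
        print(f"{emotion:>10}: {text}")
```

One caveat with a pattern this loose: ordinary parenthetical words such as "(optional)" would also be read as tags, so a real pipeline would check each tag against a list of supported ones.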

Beyond TTS, Fish Audio also offers STT (speech-to-text), SFX generation, and a voice remover, which makes it a more complete audio toolkit than most TTS-only platforms.

The free tier is enough for meaningful workflow testing. The paid Plus plan ($11/month) supports higher-volume production.

Where ElevenLabs Wins (and Where It Doesn't)

ElevenLabs earned its reputation for a reason. Its voice quality on short-form content, particularly English voiceovers, is among the strongest available. The voices carry genuine emotional nuance, and instant voice cloning produces impressive results from minimal source audio.

That said, longer recordings can come out more emotional than the script calls for. A neutral product description may pick up dramatic pauses and intensity shifts that feel more like audiobook narration than a tutorial. You can tune this, but it takes iteration, and every iteration costs credits. In a head-to-head comparison, Fish Audio's emotion tags gave more precise control over delivery without the trial-and-error loop.

Pricing is the other sticking point. ElevenLabs uses a per-character credit model whose rate varies by voice model, so predicting monthly costs takes some math:

- Starter: $5/month, 30,000 credits (~10 minutes of audio)

- Creator: $22/month, 100,000 credits

- Pro: $99/month, 500,000 credits

For teams producing content daily, costs scale quickly, especially when regenerating multiple takes. At roughly $15 per million characters for Fish Audio versus roughly $165 for ElevenLabs, Fish Audio's price advantage becomes significant at scale.
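The math behind that comparison is a single division: plan price over credit allowance. The Python sketch below does it for the three ElevenLabs tiers listed above. It assumes one credit per character, which is a best case since the rate varies by voice model. It then estimates a month of work at the ~$15 and ~$165 per-million-character rates. The workload numbers (20 scripts of 5,000 characters, three takes each) are made up for illustration.

```python
# Plan price (USD/month) and monthly credit allowance, as listed above.
ELEVENLABS_PLANS = {
    "Starter": (5.00, 30_000),
    "Creator": (22.00, 100_000),
    "Pro": (99.00, 500_000),
}

# Assumption: 1 credit = 1 character. Models that consume more credits per
# character raise the effective rate proportionally.
CREDITS_PER_CHAR = 1.0


def cost_per_million_chars(price_usd: float, credits: int,
                           credits_per_char: float = CREDITS_PER_CHAR) -> float:
    """Effective USD cost per million characters for a credit-based plan."""
    chars = credits / credits_per_char
    return price_usd / chars * 1_000_000


def monthly_cost(chars_needed: int, price_per_million: float) -> float:
    """Cost of a workload at a flat per-million-character rate."""
    return chars_needed / 1_000_000 * price_per_million


if __name__ == "__main__":
    for name, (price, credits) in ELEVENLABS_PLANS.items():
        print(f"{name:8} ${cost_per_million_chars(price, credits):7.2f} per 1M chars")

    # Hypothetical workload: 20 scripts x 5,000 chars x 3 takes each.
    chars = 20 * 5_000 * 3
    for rate in (15.0, 165.0):
        print(f"{chars:,} chars at ${rate:.0f}/1M -> ${monthly_cost(chars, rate):.2f}")
```

Under the one-credit-per-character assumption, Starter works out to about $167 per million characters, close to the ~$165 figure. Creator and Pro come out at $220 and $198. The estimate also ignores that credit plans are bought in fixed monthly blocks.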

For English-only projects where expressiveness is the top priority and the budget is flexible, ElevenLabs is a strong option. For multilingual work or cost-sensitive production, the value equation shifts.

The Enterprise Pick vs. the Creator Pick

WellSaid Labs and Murf AI sit at opposite ends of the market, which makes them worth comparing.

WellSaid Labs targets enterprise teams that need governance, SOC 2 compliance, and word-level pronunciation control. The voices sound professional and consistent. The Cues panel lets you adjust emphasis on individual words, which is useful for training and compliance-focused materials. Starting at $50 per user per month with no free tier, the pricing is aimed at organizations rather than individual creators.

Murf AI takes the opposite approach. The interface is simple enough that someone with no audio production experience can generate a usable voiceover in minutes. It pairs TTS with a built-in video editing timeline, so users can sync narration to visuals without switching platforms. At $19/month, it's positioned for marketers, educators, and small teams who need functional results fast. Voice quality is solid but not exceptional, particularly on longer or emotionally complex scripts.

Each tool excels in its intended niche, though each trades off quality, multilingual depth, and price efficiency differently. If your primary need is enterprise compliance tooling, WellSaid was built for it. If you want an extremely simple interface and don't need API access, Murf keeps friction low.

5 Things That Break Most AI Voices (and What to Watch For)

Before committing to any platform, test it with your own scripts, not with marketing samples.

- The two-minute rule. Generate at least two minutes of continuous speech. Listen for pacing drift, emotional flattening, or unnatural pauses that aren't in your script. Many tools that sound fine at 15 seconds reveal their weaknesses here.

- Mixed-language scripts. Insert a foreign product name, a technical acronym, or a phrase in another language. If the voice stumbles or shifts accent mid-sentence, expect recurring production problems.

- Whispering and emphasis. Ask the voice to whisper one sentence, then deliver the next with emphasis. Voices that handle dynamic range well tend to handle everything else well too.

- Numbers and dates. Give the tool a script containing dollar amounts, percentages, and dates. How "$4.5 billion" or "February 14, 2026" gets pronounced varies dramatically across platforms, and mistakes here hurt credibility.

- Regeneration consistency. Generate the same script several times. If tone and pacing vary significantly between outputs, you may spend more time auditioning takes than producing content. Consistency usually matters more than peak expressiveness.
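A few of these checks are easy to make repeatable. The Python sketch below covers three of them. It estimates how many words a test script needs to fill two minutes, assuming a narration pace of about 150 words per minute (a common rule of thumb, not a figure from our tests). It flags lines that mix writing systems, using Unicode character names. It also summarizes how much take duration varies across regenerations with the coefficient of variation. The take durations and the 5% threshold are placeholders, not measurements.

```python
import statistics
import unicodedata

WORDS_PER_MINUTE = 150  # assumed narration pace; measure your own voice and tool


def words_for_duration(minutes: float, wpm: int = WORDS_PER_MINUTE) -> int:
    """Approximate script length (in words) needed to fill `minutes` of speech."""
    return round(minutes * wpm)


def scripts_in(text: str) -> set[str]:
    """Rough set of writing systems in `text`, from Unicode character names.

    'LATIN SMALL LETTER A' -> 'LATIN', 'CJK UNIFIED IDEOGRAPH-4E2D' -> 'CJK'.
    """
    found = set()
    for ch in text:
        if ch.isalpha():
            name = unicodedata.name(ch, "")
            if name:
                found.add(name.split()[0])
    return found


def duration_spread(durations_s: list[float]) -> float:
    """Coefficient of variation (sample stdev / mean); needs at least two takes."""
    return statistics.stdev(durations_s) / statistics.mean(durations_s)


if __name__ == "__main__":
    print("Words for a two-minute test:", words_for_duration(2))

    line = "Our new 智能 assistant ships in March."
    systems = scripts_in(line)
    if len(systems) > 1:
        print("Mixed-script line, listen closely:", sorted(systems))

    takes = [121.4, 118.9, 126.0, 119.7]  # placeholder take durations in seconds
    cv = duration_spread(takes)
    verdict = "check pacing" if cv > 0.05 else "ok"
    print(f"Duration spread across takes: {cv:.1%} ({verdict})")
```

Duration spread only captures pacing. Tone, emphasis, and pronunciation of numbers and dates still need a careful listen.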

Who Should Use What: Matching Tools to Workflows

The right tool depends on what you're actually building, not on which platform lists the most features on a spec sheet.

- Content creators (YouTube, podcasts, social, multilingual): Fish Audio offers the strongest combination of voice naturalness, emotion control, and multilingual support at a price that won't eat your production budget. Built-in STT, SFX generation, and a voice remover mean you can handle most of your audio workflow without switching platforms. The Story Studio feature supports long-form projects such as audiobooks, with ACX-ready output.

- Developers integrating voice into apps or products: Fish Audio's API provides the latency and streaming performance that real-time use cases need, with clear documentation and flat-rate pricing that simplifies budgeting. Teams that need full control can also self-host the open-weight S2 model via SGLang. ElevenLabs' API is capable too, though its credit-based model adds complexity at scale.

- Enterprise teams prioritizing compliance and governance: WellSaid Labs is purpose-built for SOC 2, auditable workflows, and word-level control, and it is priced accordingly.

- Individual marketers or educators who need a quick voiceover without touching an API: Murf AI's visual editor takes you from script to output with minimal friction.
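If you're a developer checking a latency figure such as sub-300ms against your own use case, measure two numbers separately. One is the time until the first audio chunk arrives, which is what a listener waits for. The other is the time until the stream finishes. The Python sketch below wraps any iterator of audio chunks. `fake_tts_stream` is a hypothetical stand-in for whatever streaming client your vendor's SDK provides; it is not a real API, and its delays are arbitrary.

```python
import statistics
import time
from typing import Iterable, Iterator


def measure_stream(chunks: Iterable[bytes]) -> dict[str, float]:
    """Consume a stream of audio chunks and report latency in milliseconds.

    first_chunk_ms: time until the first chunk arrives
    total_ms: time until the stream is exhausted
    """
    start = time.perf_counter()
    first = None
    total_bytes = 0
    for chunk in chunks:  # for generators, work starts on the first next()
        if first is None:
            first = time.perf_counter() - start
        total_bytes += len(chunk)
    total = time.perf_counter() - start
    return {
        "first_chunk_ms": (first if first is not None else total) * 1000,
        "total_ms": total * 1000,
        "bytes": float(total_bytes),
    }


def fake_tts_stream(n_chunks: int = 5, delay_s: float = 0.05) -> Iterator[bytes]:
    """Hypothetical stand-in for a vendor streaming client."""
    for _ in range(n_chunks):
        time.sleep(delay_s)  # simulate synthesis/network time per chunk
        yield b"\x00" * 1024  # 1 KiB of silent placeholder audio


if __name__ == "__main__":
    runs = [measure_stream(fake_tts_stream()) for _ in range(5)]
    first = statistics.median(r["first_chunk_ms"] for r in runs)
    total = statistics.median(r["total_ms"] for r in runs)
    print(f"median first chunk: {first:.0f} ms, median total: {total:.0f} ms")
```

Taking the median over several runs smooths out network jitter; a single request says little about typical latency.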

Conclusion

In 2026, AI voice generators have evolved from a novelty into production infrastructure. The gap between the top platforms and the rest isn't about who sounds best in a 15-second demo. It's about who holds up for two minutes, who handles your real scripts without breaking, and who prices the service in a way that makes sense for your volume.

Fish Audio consistently meets all three requirements. It offers the most natural voice cloning on the market, the most expressive and controllable emotion system, 80+ languages with true cross-lingual cloning, and pricing under $15 per million characters. Together, these make it the strongest overall pick for creators and developers who need production-ready voice output without an enterprise-level budget. Test it with your own scripts. That's the only comparison that matters.

Kyle is a Founding Engineer at Fish Audio and UC Berkeley Computer Scientist and Physicist. He builds scalable voice systems and grew Fish into the #1 global AI text-to-speech platform. Outside of startups, he has climbed 1345 trees so far around the Bay Area. Find his irresistibly clouty thoughts on X at @kile_sway.

Read more from Kyle Cui