How to Turn On Speech to Text and Start Dictating on Any Device

Most people type at 40 words per minute. Most people speak at 130. That's a 3x gap you're leaving on the table every time you thumb-type a message, hunt-and-peck through an email, or transcribe meeting notes by hand after the fact.
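To make the gap concrete, here is the arithmetic with the figures above, written in Python. The 2,000-word length is an arbitrary example. The calculation also ignores correction time, which is where built-in dictation loses ground on longer pieces.

```python
# Rough time comparison using the article's figures: 40 wpm typing vs. 130 wpm speaking.
TYPING_WPM = 40
SPEAKING_WPM = 130


def minutes_to_produce(words: int, wpm: int) -> float:
    """Minutes needed to produce `words` at a steady `wpm` rate (no pauses or corrections)."""
    return words / wpm


words = 2000  # example length, e.g. a long article draft
typing_min = minutes_to_produce(words, TYPING_WPM)      # 50.0 minutes
speaking_min = minutes_to_produce(words, SPEAKING_WPM)  # about 15.4 minutes
speedup = SPEAKING_WPM / TYPING_WPM                     # 3.25

print(f"Typing:   {typing_min:.1f} min")
print(f"Speaking: {speaking_min:.1f} min")
print(f"Speed-up: {speedup:.2f}x")
```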

Speech to text, also called dictation or voice typing, converts your spoken words into written text in real time. Every major device has it built in. Turning it on is simple. Getting accurate results takes knowing a few things the setup screen doesn't tell you.

Windows 10 and 11

Windows has two speech-to-text tools. Voice Typing is the lightweight dictation tool. Windows Speech Recognition is the older, more comprehensive system.

Enabling Voice Typing

Voice Typing is the faster option and the one Microsoft actively maintains. It works in any text field across the system.

- Press Win + H to open the Voice Typing toolbar. A small microphone panel appears at the top of your screen

- Click the microphone icon or press Win + H again to start dictating

- Speak naturally. Windows transcribes in real time and inserts text at your cursor position

First-time setup notes:

- Microphone permission: Windows may prompt you to grant microphone access. Allow it. Without this, Voice Typing silently fails

- Online speech recognition: For better accuracy, make sure online speech recognition is enabled under Settings > Privacy & Security > Speech. The cloud-based model is significantly more accurate than the offline fallback

- Auto-punctuation: Voice Typing can insert periods, commas, and question marks automatically. Toggle this on via the gear icon on the Voice Typing toolbar

Voice commands you can speak while dictating:

- "Period," "comma," "question mark," "exclamation point" to insert punctuation

- "New line" or "new paragraph" to create line breaks

- "Delete that" to remove the last phrase

- "Stop dictation" to turn off the microphone
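Voice Typing interprets these commands inside Microsoft's own engine. As a rough mental model only, the Python sketch below shows how a transcript containing spoken command words could be turned into formatted text. The function names (`render_segment`, `dictate`) are made up for illustration, and the sketch assumes each list item is one phrase spoken between pauses. It is not how Windows actually implements Voice Typing.

```python
import re

# Simplified, hypothetical model of spoken dictation commands.
# Not Microsoft's implementation; for illustration only.
PUNCTUATION = {
    "period": ".",
    "comma": ",",
    "question mark": "?",
    "exclamation point": "!",
}
BREAKS = {
    "new line": "\n",
    "new paragraph": "\n\n",
}


def render_segment(segment: str) -> str:
    """Replace spoken punctuation and line-break commands within one phrase."""
    text = segment
    for phrase, symbol in PUNCTUATION.items():
        # Swallow the space before the command so the mark attaches to the previous word.
        text = re.sub(rf"\s*\b{re.escape(phrase)}\b", symbol, text, flags=re.IGNORECASE)
    for phrase, brk in BREAKS.items():
        text = re.sub(rf"\s*\b{re.escape(phrase)}\b\s*", brk, text, flags=re.IGNORECASE)
    return text


def dictate(segments: list[str]) -> str:
    """Assemble phrases spoken between pauses, honoring 'delete that' and 'stop dictation'."""
    rendered: list[str] = []
    for segment in segments:
        command = segment.strip().lower()
        if command == "delete that":
            if rendered:
                rendered.pop()  # remove the last phrase
        elif command == "stop dictation":
            break  # microphone off: ignore anything said afterwards
        else:
            rendered.append(render_segment(segment.strip()))
    text = " ".join(rendered)
    text = re.sub(r" +([.,?!])", r"\1", text)  # no space before punctuation
    text = re.sub(r" *\n *", "\n", text)       # no spaces around line breaks
    return text


print(dictate([
    "hello comma how are you question mark",
    "I am fine period",
    "this is wrong",
    "delete that",
    "new paragraph",
    "bye period",
    "stop dictation",
    "ignored",
]))
```

The sketch doesn't capitalize after a paragraph break or a period; a production engine handles capitalization and spacing with its own rules.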

Windows Speech Recognition

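The older Speech Recognition tool offers broader control, including voice commands for navigating Windows, opening apps, and clicking buttons. It's more powerful but more complex. On recent Windows 11 releases, Microsoft has deprecated it in favor of Voice Access, which is also found under Settings > Accessibility > Speech.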

- Open Settings > Accessibility > Speech (Windows 11) or search "Windows Speech Recognition" in the Start menu

- Follow the setup wizard, which includes a microphone calibration step and a brief voice training exercise

For pure dictation, Voice Typing is the better choice. Windows Speech Recognition is worth exploring if you want hands-free control of your entire computer.

macOS

macOS offers Dictation for system-wide speech to text and Voice Control for fully hands-free operation.

Enabling Dictation

- Open System Settings > Keyboard

- Scroll to the Dictation section and toggle it on

- macOS will ask you to confirm and may download a language model

Once enabled, press the microphone key on your keyboard (on newer Macs) or press Fn twice (or whatever shortcut you configure) to start dictating in any text field.

Configuration worth checking:

- Language: Click the language dropdown to add additional dictation languages. macOS supports multiple simultaneous languages, and the engine auto-detects which one you're speaking

- Auto-punctuation: Toggle on to let macOS insert periods, commas, and question marks based on your pacing and intonation

- Shortcut: Customize the activation shortcut under the Dictation settings if double-pressing Fn feels awkward

macOS Dictation sends audio to Apple's servers for processing by default. On Apple Silicon Macs running macOS Ventura or later, on-device processing is available for supported languages, keeping your audio local.

Voice Control

Voice Control is macOS's full voice-command system. It goes beyond dictation to let you navigate, click, scroll, and edit using spoken commands.

- Open System Settings > Accessibility > Voice Control and toggle on

Voice Control uses on-device processing exclusively and works offline. It's designed primarily for accessibility users who need complete hands-free operation, but writers and power users sometimes adopt it for its precise editing commands like "select previous sentence" or "capitalize that."

iPhone and iPad

iOS has had dictation built in since 2011. The accuracy has improved dramatically, especially on devices with Apple's Neural Engine.

Enabling Dictation

- Go to Settings > General > Keyboard

- Toggle on Enable Dictation

- Confirm when prompted

To use it, open any app with a text field and tap the microphone icon on the keyboard. Start speaking. Tap the microphone again or the keyboard icon to stop.

On iPhone and iPad running iOS 16 or later, dictation and keyboard input work simultaneously. You can speak a sentence, then manually correct a word with the keyboard, then continue speaking, all without toggling modes. This hybrid input is one of the most underrated productivity features on iOS.

Useful details:

- Emoji by voice: Say "heart emoji" or "thumbs up emoji" and iOS inserts the corresponding emoji

- Punctuation: Speak "period," "comma," "question mark," "exclamation point," or "new paragraph" naturally within your sentence

- Language switching: If you have multiple keyboards installed, dictation auto-detects the language you're speaking in most cases

- On-device processing: iPhone models with A12 Bionic or later handle dictation on-device for supported languages, meaning your audio doesn't leave the phone

Android

Android's speech-to-text is powered by Google's voice recognition engine and works system-wide through Gboard or most other keyboard apps.

Enabling Voice Typing in Gboard

Gboard is the default keyboard on most Android phones. Voice typing is typically enabled by default, but here's how to verify and configure it:

- Open Settings > System > Languages & Input > On-Screen Keyboard > Gboard

- Tap Voice Typing and make sure it's toggled on

- Alternatively, just open any text field and look for the microphone icon on the Gboard toolbar. Tap it to start dictating

On Samsung devices using Samsung Keyboard:

- Open Settings > General Management > Samsung Keyboard Settings

- Tap Voice Input and select your preferred speech engine

Key settings to adjust:

- Offline speech recognition: Under Gboard settings, go to Voice Typing > Offline Speech Recognition to download language packs for use without the internet. Offline accuracy is lower, but it removes the network round trip, so it keeps working on slow or missing connections

- Auto-punctuation: Usually on by default in Gboard. The engine adds periods at natural pauses and occasionally inserts commas

- Voice match: If accuracy seems poor, retrain your voice model under Settings > Google > Settings for Google Apps > Search, Assistant & Voice > Voice > Voice Match

Google Assistant Dictation

For quick text input, you can also say "Hey Google, type..." followed by your message in apps that support Assistant integration. This is faster for short messages but less practical for extended dictation.

Chromebook

ChromeOS supports dictation through its built-in accessibility features and through Google's speech engine in web apps.

Enabling Dictation

- Go to Settings > Accessibility > Keyboard and Text Input

- Toggle on Enable Dictation

- A small microphone icon appears in the system tray. Click it to start dictating in any text field

ChromeOS dictation uses the same Google speech engine as Android. Accuracy, language support, and voice commands are nearly identical.

Using Voice Typing in Google Docs

If you primarily work in Google Docs, there's a separate voice typing tool built into the app:

- Open a Google Doc

- Go to Tools > Voice Typing or press Ctrl + Shift + S

- Click the microphone icon that appears in the left margin and start speaking

Google Docs Voice Typing supports over 100 languages and includes voice commands for formatting, such as "bold," "italicize," "create bulleted list," and "apply heading 2." Voice commands are available only in English. For document-heavy work on a Chromebook, this is often more capable than the system-level dictation.

Why Accuracy Drops After the First Sentence

You turned on speech to text, spoke a sentence, and it worked. Then you tried dictating a full paragraph and the result was a mess. Missed words, wrong homophones, punctuation in the wrong places.

This is the most common experience, and the cause usually isn't the speech engine. It's how people speak when they're dictating for the first time.

Natural conversation includes filler words, false starts, mid-sentence corrections, and trailing-off thoughts. Your brain auto-corrects all of this when another human is listening. A speech-to-text engine transcribes everything literally, including every "um," "uh," "actually wait," and half-finished thought.
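If you're stuck with a literal transcript, a simple cleanup pass can strip the most common fillers. The Python sketch below is a naive regex approach; the `strip_fillers` helper and its filler list are hypothetical choices for illustration. It won't catch false starts or mid-sentence corrections, which still need a human edit.

```python
import re

# Illustrative filler list; extend it for your own speech habits.
FILLERS = ["um", "uh", "erm", "you know"]

# Match a filler as a whole word or phrase, plus an optional trailing comma and spaces.
_FILLER_RE = re.compile(
    r"\b(?:" + "|".join(re.escape(f) for f in FILLERS) + r")\b,?\s*",
    flags=re.IGNORECASE,
)


def strip_fillers(transcript: str) -> str:
    """Remove filler words from a literal transcript and tidy the spacing."""
    cleaned = _FILLER_RE.sub("", transcript)
    cleaned = re.sub(r"\s{2,}", " ", cleaned)         # collapse doubled spaces
    cleaned = re.sub(r"\s+([.,?!])", r"\1", cleaned)  # no space before punctuation
    return cleaned.strip()


print(strip_fillers("So um, the meeting is uh moved to Friday."))
```

Word boundaries keep the pattern from touching words that merely contain a filler, such as "umbrella."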

Three adjustments that improve accuracy immediately:

- Finish your thought before you speak it. Pause for a beat, form the complete sentence in your head, then say it. This single habit eliminates most transcription errors

- Speak punctuation explicitly until you can trust auto-punctuation. Say "comma" and "period" out loud. It feels awkward for about five minutes, then becomes automatic

- Dictate in short bursts, not streams. Speak 2-3 sentences, pause, review, then continue. Reviewing after each burst catches errors while the sentence is still fresh in your mind; a long unbroken stream leaves you with a wall of mistakes to untangle at the end

Built-in speech-to-text engines handle these adjustments well for short messages and quick notes. For longer content like meeting transcriptions, interviews, lecture recordings, or podcast scripts, the accuracy demands go up and the built-in tools start showing their limits.

When Built-In Dictation Hits Its Ceiling

Device-level speech to text is designed for real-time, short-form input. You speak, it transcribes, you correct errors manually, and you move on. For a text message or a search query, that's enough.

The workflow breaks down in a few specific scenarios:

- Long-form transcription: Dictating a 2,000-word article means correcting errors every few sentences. The interruptions kill the speed advantage that made dictation appealing in the first place

- Pre-recorded audio: Built-in dictation requires live microphone input. It can't transcribe an audio file, a meeting recording, or a podcast episode

- Multiple speakers: Device dictation doesn't distinguish between voices. In a meeting or interview, everything gets merged into a single undifferentiated text stream

- Specialized vocabulary: Medical terms, legal jargon, technical product names, and non-English words trigger frequent misrecognitions that auto-correct makes worse
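The multiple-speakers problem is easiest to see as data. Transcription tools that separate speakers (often called diarization) return segments tagged with a speaker label, while single-voice dictation effectively hands back only the text. In the Python sketch below, the `Segment` type, its fields, and the sample meeting are all hypothetical; they are not any product's actual output format.

```python
from dataclasses import dataclass


@dataclass
class Segment:
    """One stretch of speech. Field names are illustrative, not a real API schema."""
    speaker: str
    start_s: float  # start time in seconds
    text: str


def merged_stream(segments: list[Segment]) -> str:
    """What single-voice dictation effectively produces: one undifferentiated block."""
    return " ".join(seg.text for seg in segments)


def speaker_transcript(segments: list[Segment]) -> str:
    """Group consecutive segments by speaker into labeled, timestamped turns."""
    lines: list[str] = []
    current_speaker = None
    for seg in segments:
        if seg.speaker != current_speaker:
            minutes, seconds = divmod(int(seg.start_s), 60)
            lines.append(f"[{minutes:02d}:{seconds:02d}] {seg.speaker}: {seg.text}")
            current_speaker = seg.speaker
        else:
            lines[-1] += " " + seg.text  # same speaker kept talking
    return "\n".join(lines)


meeting = [
    Segment("Alice", 0.0, "Can we ship on Friday?"),
    Segment("Bob", 3.2, "Only if QA signs off."),
    Segment("Bob", 5.0, "I'll check today."),
    Segment("Alice", 65.4, "Great, thanks."),
]
print(merged_stream(meeting))
print(speaker_transcript(meeting))
```

The merged version reads as one person talking to themselves; the labeled version preserves who committed to what, which is usually the point of transcribing a meeting.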

These aren't edge cases. They're the scenarios where speech to text delivers the most value, and they're exactly where built-in tools fall short.

AI Speech to Text for Audio Files, Meetings, and Extended Transcription

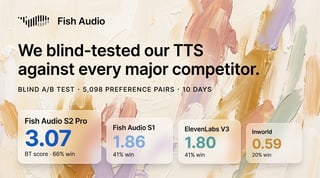

Fish Audio's Speech to Text takes a different approach. Instead of real-time microphone-only dictation, it processes audio files and generates high-accuracy transcriptions using neural models trained on diverse speech patterns.

What that means in practice:

- Upload any audio file: MP3, WAV, M4A, and other standard formats. Record a meeting, a lecture, an interview, or a podcast episode and get a text transcription without typing a word

- Multi-language support: The engine handles a wide range of languages and can process audio where speakers switch between languages mid-conversation

- Higher accuracy on extended content: Where built-in dictation degrades over long passages, Fish Audio's STT model maintains consistency across minutes or hours of audio. The neural architecture is designed for sustained transcription, not just short bursts

- No microphone required: You don't need to speak into your device in real time. Upload a recording from any source and get the transcript back

For content creators, journalists, researchers, and anyone who regularly converts spoken words into written text, the workflow shifts from "dictate and constantly fix errors" to "record naturally, then transcribe the whole thing at once."

API Access for Developers

If you're building an application that needs speech-to-text capability, Fish Audio's API provides programmatic access to the same transcription engine. Use cases include:

- Meeting tools: Automatic transcription of conference calls

- Accessibility features: Real-time captioning for video platforms

- Content pipelines: Batch transcription of podcast episodes or video narration

- Voice interfaces: Converting user speech into actionable text within apps

The API supports streaming for real-time applications and batch processing for pre-recorded files. Details and pricing at fish.audio/plan.
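For the batch use case, a pipeline generally has three steps: find supported audio files, send each one to a transcription backend, and save the text next to the audio. The Python sketch below keeps the backend pluggable because it does not reproduce Fish Audio's actual API. `transcribe_folder` and the `Transcriber` callable are hypothetical, and the extension list simply mirrors the formats named earlier in this article. Check the official API documentation for the real endpoint and parameter names.

```python
from pathlib import Path
from typing import Callable

# Formats named in this article; adjust to what your backend actually accepts.
SUPPORTED_EXTENSIONS = {".mp3", ".wav", ".m4a"}

# A transcriber takes an audio file path and returns its text.
# Hypothetical interface: wrap your real speech-to-text client call in a function like this.
Transcriber = Callable[[Path], str]


def transcribe_folder(folder: Path, transcribe: Transcriber) -> list[Path]:
    """Transcribe every supported audio file in `folder`, writing <name>.txt beside it.

    Files that already have a transcript are skipped, so the job can be re-run safely.
    Returns the transcript paths written during this run.
    """
    written: list[Path] = []
    for audio in sorted(folder.iterdir()):
        if not audio.is_file() or audio.suffix.lower() not in SUPPORTED_EXTENSIONS:
            continue
        out = audio.with_suffix(".txt")
        if out.exists():
            continue  # already transcribed on a previous run
        text = transcribe(audio)
        out.write_text(text, encoding="utf-8")
        written.append(out)
    return written
```

To try it without any API, pass a stand-in such as `lambda p: f"(transcript of {p.name})"`. Swapping in a real client then only changes that one function, and skipping existing transcripts means an interrupted batch can resume where it stopped.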

Conclusion

Speech to text is available on every major platform: Win + H on Windows, the microphone key or a double press of Fn on Mac, the microphone icon on iPhone and Android, and the system tray mic on Chromebook. Turning it on takes seconds, and for quick messages and short notes, built-in dictation works well enough.

For anything longer, the built-in tools introduce a correction overhead that erases the speed advantage. If you're transcribing recordings, processing meetings, or converting extended audio into text, Fish Audio's Speech to Text handles the workload that device-level dictation wasn't built for. Upload, transcribe, done.

Kyle is a Founding Engineer at Fish Audio and UC Berkeley Computer Scientist and Physicist. He builds scalable voice systems and grew Fish into the #1 global AI text-to-speech platform. Outside of startups, he has climbed 1345 trees so far around the Bay Area. Find his irresistibly clouty thoughts on X at @kile_sway.

Read more from Kyle Cui