Top AI Voice Generators in 2026: What Actually Sounds Human (and What Doesn't)

Two hundred voices. Thirty languages. Sub-300ms latency. Every AI voice generator spec sheet reads like it was written by the same marketing team. The numbers differ just enough to fill a comparison table, but they don't answer the question that actually matters: does this tool still sound human at the two-minute mark, or does it gradually flatten into a machine reading your script?

That's not something a features page can tell you. It's something your ears detect within the first 90 seconds of a real production read.

Most Comparison Lists Rank on the Wrong Things

Scroll through ten "best AI voice generator" articles, and you'll see the same criteria repeated: number of voices, number of languages, price per month. Those metrics are easy to quantify, which is precisely why they dominate comparison tables. The problem is that they don't reliably predict whether a tool will perform well in your work.

Long-form consistency matters first. A voice that sounds warm for two sentences can drift into monotone by paragraph three. Pacing flattens. Emotional variation fades. You end up with audio that technically delivers the words but lacks human presence. No spec sheet captures that.

Mixed-language handling is the second blind spot. If your script drops a Spanish product name into an English sentence or switches between English and Mandarin, many generators struggle. You may hear rhythm breaks, mispronounced syllables, or abrupt accent shifts.

Emotion granularity is the third gap. Many tools offer "happy" or "sad" as presets. A product announcement requires controlled enthusiasm, not the exaggerated pitch of a carnival barker. A tutorial needs calm authority, not theatrical narration. The difference between "has emotion controls" and "emotion controls that sound natural" is where real performance differences emerge.

7 AI Voice Generators, Ranked by What Happens After the Demo

After testing each platform with the same 800-word script across English, Mandarin, and Spanish, here's how they performed under real production conditions:

| Tool | Voice Quality (Long-form) | Emotion Control | Multilingual | API Latency | Starting Price |
|---|---|---|---|---|---|
| Fish Audio | Most natural, consistent across minutes | Granular emotion tags | 80+ languages, SOTA cross-language | Sub-300ms streaming | Free / $11/month Plus |
| ElevenLabs | Strong short-form, can over-emote long-form | Good, needs tuning | 32 languages, weaker on mixed scripts | Fast | Free / $5/month Starter |
| Play.ht | Clean and steady | Limited | 20+ languages | Moderate | Free tier available |
| Resemble AI | Good expressiveness | Emotion prompts | Moderate range | Moderate | Pay-as-you-go |
| WellSaid Labs | Professional, consistent | Granular word-level | English-focused | Fast | $50/month |
| Murf AI | Solid for corporate | Basic | 20+ languages | Moderate | $19/month |
| LOVO (Genny) | Expressive, creator-focused | Emotion-based | 100+ languages | Moderate | Free tier available |

That table provides a quick overview. The details below explain why the ranking appears as it does.

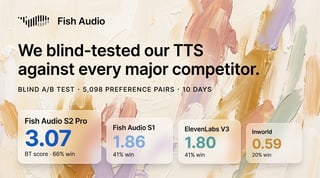

Fish Audio: The $11 Tool vs. the $99 Plans

Fish Audio doesn't sound like what you'd expect from a platform charging $11 per month. In testing, it produced the most natural-sounding voice cloning we've heard, varying emotion consistently across multi-minute scripts without drifting into the flat, robotic tone that plagues most generators beyond the 90-second mark. The S2 model currently ranks #1 on Elo ratings and independent benchmarks, and the difference is audible in real production work.

Four differentiators stood out:

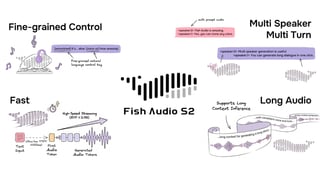

- The most expressive and controllable emotion system available. Instead of static sliders, you insert tags like (cheerful), (serious), (whispering), or (thoughtful) directly into the script. The delivery shifts naturally within the same take. The level of granularity here surpasses ElevenLabs and every other tool we tested; you're not choosing from a handful of presets, you're directing the performance. For content that transitions from explanation to call to action, this flexibility is more important than raw voice count.

- Multilingual performance that doesn't break on mixed scripts. When our test script mixed English and Chinese terminology, rhythm and pronunciation remained stable without extensive phonetic correction. Fish Audio supports over 80 languages, and the cross-language transitions sound like a bilingual speaker rather than two models stitched together. Voice cloning works cross-lingually too: clone a voice from an English sample, and it speaks Mandarin with the same natural timbre.

- Sub-300ms API with flat-rate pricing. Fish Audio's API delivers streaming response times fast enough for real-time conversational AI and interactive content. The flat-rate structure simplifies budgeting compared to credit-based systems. The S2 model is open-weights, built on the SGLang inference engine, so developers who need self-hosted deployment have that option (commercial license required).

- 2,000,000+ voice library and 15-second cloning. The voice cloning feature needs only 15 seconds of sample audio to produce a clone that sounds closer to the original speaker than any competing tool we tested. For creators building brand voices or developers prototyping character dialogue, this reduces setup friction to near zero.
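The inline emotion tags described in the first point above lend themselves to a small scripting helper. The sketch below only builds the tagged text; it makes no API call. The tag names are the four mentioned above, while `tag_script` and `KNOWN_TAGS` are hypothetical names chosen for illustration, and the exact tag syntax a platform accepts should be checked against its documentation.

```python
from typing import Iterable, Tuple

# Tags named in this article. Whether a platform accepts other tags
# is not assumed here.
KNOWN_TAGS = {"cheerful", "serious", "whispering", "thoughtful"}


def tag_script(segments: Iterable[Tuple[str, str]]) -> str:
    """Join (tag, text) pairs into one script with inline "(tag)" markers."""
    parts = []
    for tag, text in segments:
        if tag not in KNOWN_TAGS:
            raise ValueError(f"unknown emotion tag: {tag!r}")
        parts.append(f"({tag}) {text.strip()}")
    return " ".join(parts)


# Example: a script that moves from explanation to call to action.
script = tag_script([
    ("thoughtful", "Most voice tools sound fine for fifteen seconds."),
    ("serious", "The real test starts at the two-minute mark."),
    ("cheerful", "Try it with your own script today!"),
])
print(script)
```

Keeping the tags in a data structure rather than hand-typing them makes it easier to regenerate the same script with a different delivery and compare takes.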

Beyond TTS, Fish Audio also offers STT (speech-to-text), SFX generation, and a vocal remover, making it a more complete audio toolkit than most TTS-only platforms.

The free tier allows meaningful workflow testing. The Plus plan starts at $11/month, and a higher tier at $75/month supports higher-volume production.

Where ElevenLabs Wins (and Where It Doesn't)

ElevenLabs earned its reputation for a reason. The voice quality on short-form content, particularly English narration, is among the strongest available. Voices convey genuine emotional nuance, and the instant voice-cloning feature produces impressive results from minimal source audio.

That said, on longer recordings the voices can emote more strongly than the script calls for. A neutral product description might include dramatic pauses and intensity shifts that read more like audiobook narration than a tutorial. You can tune this down, but it takes iteration, and iteration costs credits. In direct comparison, Fish Audio's emotion tags give you more precise control over delivery without the trial-and-error loop.

Pricing is the other sticking point. ElevenLabs uses a credit-per-character model that varies by voice model, so forecasting monthly costs requires some calculation:

- Starter: $5/month, 30,000 credits (~10 minutes of audio)

- Creator: $22/month, 100,000 credits

- Pro: $99/month, 500,000 credits

For teams producing daily content, costs escalate quickly, especially when regenerating multiple takes. Measured against Fish Audio's sub-$15 pricing per million characters, the gap becomes significant at scale.
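To forecast costs from the tiers listed above, a rough sketch of the arithmetic follows. It assumes one credit per character, which is a simplification since the credit rate varies by voice model. The workload figures (characters per script, takes, scripts per month) are hypothetical, as are the function names.

```python
# Plan figures as listed above: (monthly price in USD, credits included).
PLANS = {
    "Starter": (5, 30_000),
    "Creator": (22, 100_000),
    "Pro": (99, 500_000),
}


def usd_per_million_credits(price_usd: float, credits: int) -> float:
    """Effective price of one million credits on a given plan."""
    return price_usd * 1_000_000 / credits


def credits_needed(chars_per_script: int, takes: int, scripts: int) -> int:
    """Credits consumed per month, assuming one credit per character."""
    return chars_per_script * takes * scripts


for name, (price, credits) in PLANS.items():
    print(f"{name}: ${usd_per_million_credits(price, credits):.2f} per million credits")

# Hypothetical workload: 20 scripts a month, 5,000 characters each, 3 takes per script.
monthly = credits_needed(chars_per_script=5_000, takes=3, scripts=20)
print(f"Monthly credits needed: {monthly:,}")
```

Under these assumptions the tiers work out to about $166.67, $220.00, and $198.00 per million credits, and the hypothetical workload consumes 300,000 credits a month. Regenerated takes multiply usage directly, which is why retakes dominate the bill.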

For English-only projects in which expressiveness is the top priority and the budget is flexible, ElevenLabs is a strong option. For multilingual work or cost-sensitive production, the value equation shifts.

The Enterprise Pick vs. The Creator Pick

WellSaid Labs and Murf AI represent different ends of the market spectrum, making them worth comparing.

WellSaid Labs targets enterprise teams that require governance, SOC 2 compliance, and word-level pronunciation control. Voices sound professional and consistent. The Cues panel allows adjustment of emphasis on individual words, which is useful for training and compliance-heavy material. Starting at $50 per user per month, with no free tier, it's priced for organizations rather than solo creators.

Murf AI takes the opposite approach. The interface is simple enough for someone with no audio production background to generate a usable voiceover in minutes. It integrates TTS with a built-in video-editing timeline, allowing users to sync narration to visuals without switching platforms. At $19/month, it's positioned for marketers, educators, and small teams needing functional output quickly. Voice quality is solid but not exceptional, particularly for longer or emotionally complex scripts.

Each tool excels in its intended niche, with trade-offs in quality, multilingual depth, and price efficiency. If your primary need is enterprise compliance tooling, WellSaid is built for that. If you need a dead-simple interface and don't care about API access, Murf reduces friction.

5 Things That Break Most AI Voices (and What to Listen For)

Before you commit to any platform, test it using your own scripts, not marketing samples.

- The two-minute rule. Generate at least two minutes of continuous speech. Listen for pacing drift, emotional flattening, or unnatural pauses not present in your script. Many tools that sound great at 15 seconds reveal weaknesses here.

- Mixed-language scripts. Insert a foreign product name, technical acronym, or code-switched phrase. If the voice stumbles or shifts accent mid-sentence, expect recurring production issues.

- Whisper and emphasis. Ask the voice to whisper a line, then deliver the next line with emphasis. Voices that handle dynamic range well tend to handle everything else well, too.

- Numbers and dates. Provide the tool with a script containing dollar amounts, percentages, and dates. Pronunciation of "$4.5 billion" or "February 14, 2026" varies wildly across platforms, and errors here undermine credibility.

- Regeneration consistency. Generate the same script multiple times. If tone and pacing vary significantly between outputs, you may spend more time auditioning takes than producing content. Consistency often matters more than peak expressiveness.
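Two of the checks above are easy to put numbers on before you listen: whether a test script is long enough for the two-minute rule, and how much take durations vary between regenerations. The sketch below assumes a narration pace of 150 words per minute, which is our assumption and not a measured figure for any platform. Take durations are passed in as plain numbers, so how you measure them is left to your own tooling. All names here are hypothetical.

```python
from statistics import mean, pstdev
from typing import Sequence

WORDS_PER_MINUTE = 150  # assumed narration pace, not a measured figure


def estimated_minutes(script: str, wpm: int = WORDS_PER_MINUTE) -> float:
    """Rough spoken length of a script, based on word count alone."""
    return len(script.split()) / wpm


def long_enough(script: str, minimum_minutes: float = 2.0) -> bool:
    """True if the script should yield at least `minimum_minutes` of speech."""
    return estimated_minutes(script) >= minimum_minutes


def duration_spread(durations_s: Sequence[float]) -> float:
    """Coefficient of variation of take durations (0.0 means identical)."""
    return pstdev(durations_s) / mean(durations_s)


test_script = " ".join(["word"] * 300)
print(long_enough(test_script))  # 300 words at 150 wpm is two minutes

# Durations in seconds of three regenerations of the same script (made-up values).
print(f"{duration_spread([118.0, 122.0, 120.0]):.3f}")
```

Duration is only a proxy. Two takes can match in length and still differ in tone, so a low spread narrows down which takes to audition but does not replace listening.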

Who Should Use What: Matching Tools to Workflows

The right tool depends on what you're actually building, not which platform has the most features on a spec sheet.

- Content creators (YouTube, podcasts, social, multilingual): Fish Audio gives you the strongest combination of voice naturalness, emotion control, and multilingual support at a price that doesn't eat your production budget. The built-in STT, SFX generation, and vocal remover mean you can handle most of your audio workflow without switching platforms. The Story Studio feature supports long-form projects such as audiobooks with ACX-ready output.

- Developers building voice into applications or products: Fish Audio's API provides the latency and streaming performance required for real-time use cases, with clear documentation and flat-rate pricing that simplifies budgeting. The open-weights S2 model can also be self-hosted via SGLang for teams that need full control. ElevenLabs' API is also capable, though the credit-based model adds complexity at scale.

- Enterprise teams prioritizing compliance and governance: WellSaid Labs is purpose-built for SOC 2, auditable workflows, and word-level control, with the pricing to match.

- Solo marketers or educators who need a quick voiceover without touching an API: Murf AI's visual editor gets you from script to output with minimal friction.

Conclusion

AI voice generators in 2026 have evolved from novelty to production infrastructure. The gap between the top platforms and the rest isn't about who sounds best in a 15-second demo. It's about who holds up at two minutes, who handles your actual scripts without breaking, and who prices the service in a way that makes sense for your volume.

Fish Audio consistently delivers on all three. The most natural voice cloning on the market, the most expressive and controllable emotion system, 80+ languages with real cross-lingual cloning, and sub-$15 pricing per million characters make it the strongest overall pick for creators and developers who need production-ready voice output without enterprise-tier budgets. Test it with your own scripts. That's the only comparison that matters.

Kyle Cui is a Founding Engineer at Fish Audio and a UC Berkeley computer scientist and physicist. He builds scalable voice systems and grew Fish into the #1 global AI text-to-speech platform. Outside of startups, he has climbed 1345 trees so far around the Bay Area. Find his irresistibly clouty thoughts on X at @kile_sway.